Custom High-Speed Data Acquisition Systems

with LabVIEW using NI Hardware – DAQ Equipment

Over 2,000 custom data acquisition systems with LabVIEW delivered.

Do you need some of these?

Automated data acquisition systems

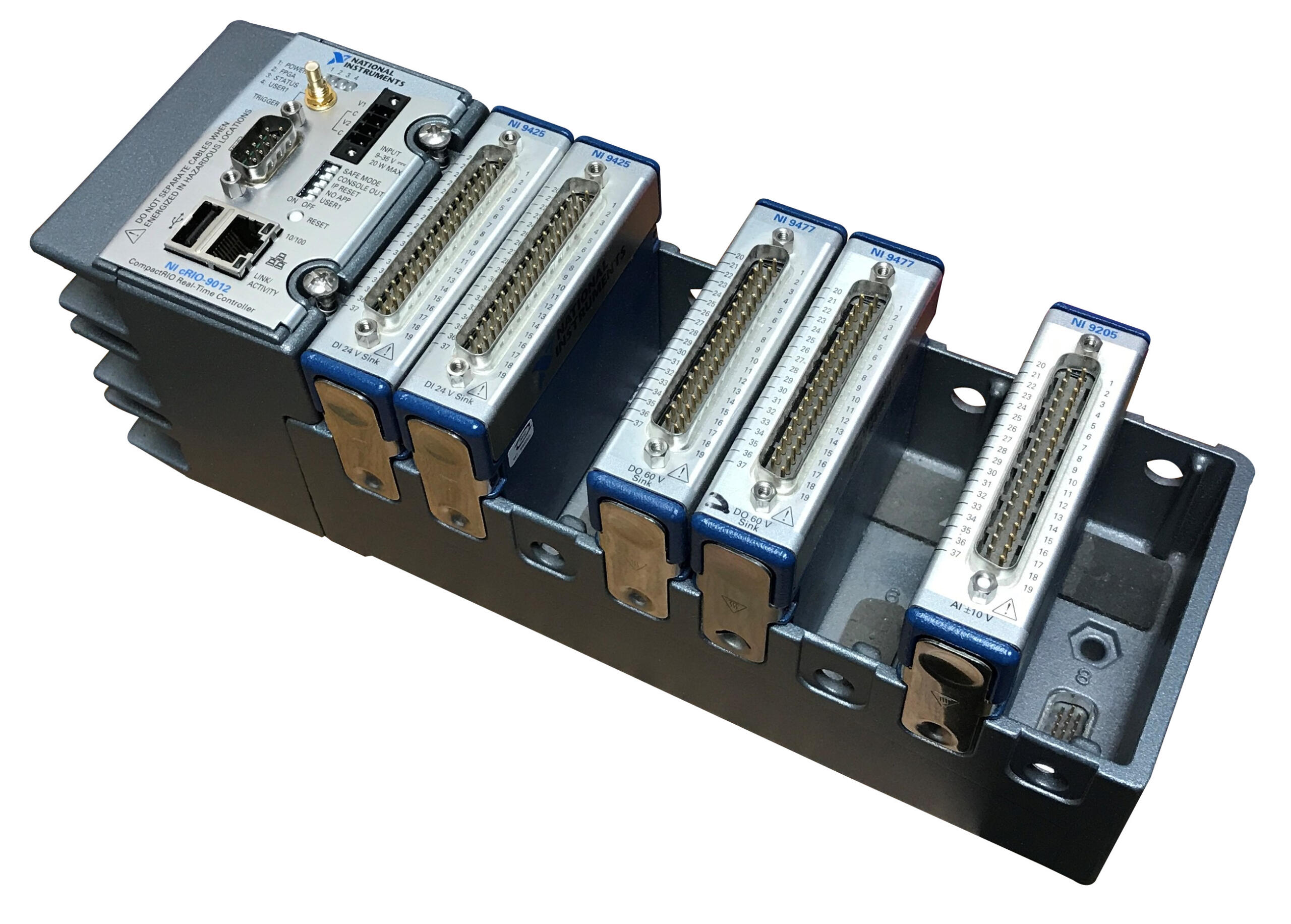

Rugged data acquisition systems – utilizing off-the-shelf hardware such as the NI CompactRIO

High-speed data acquisition systems – acquire multiple channels at >1 MS/s

Stand-alone data acquisition systems – headless operation acquiring data for days, weeks, or months

Analog data acquisition systems – acquire all sorts of analog data, including vibration, pressure, temperature, current, load, force, voltage, distance, humidity, and magnetic fields.

Digital data acquisition systems – 100s of MB/s to >1 GB/s

Data acquisition and control systems – sometimes you need to react immediately to the acquired data, such as with a rotational encoder fed to a PID control loop to control an actuator.

Need a custom data acquisition system?

We’ve helped teams at some of the world’s most innovative companies

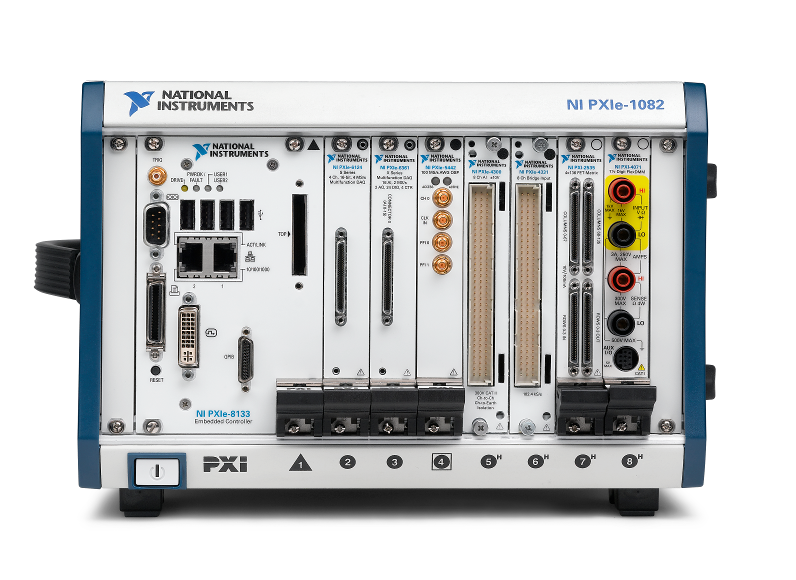

We use National Instruments hardware for data acquisition, including PXI, cRIO, and cDAQ. We’ve delivered over 500 cRIO-based systems and over 1,500 PXI-based solutions.

Want more proof points? Check out these data acquisition case studies:

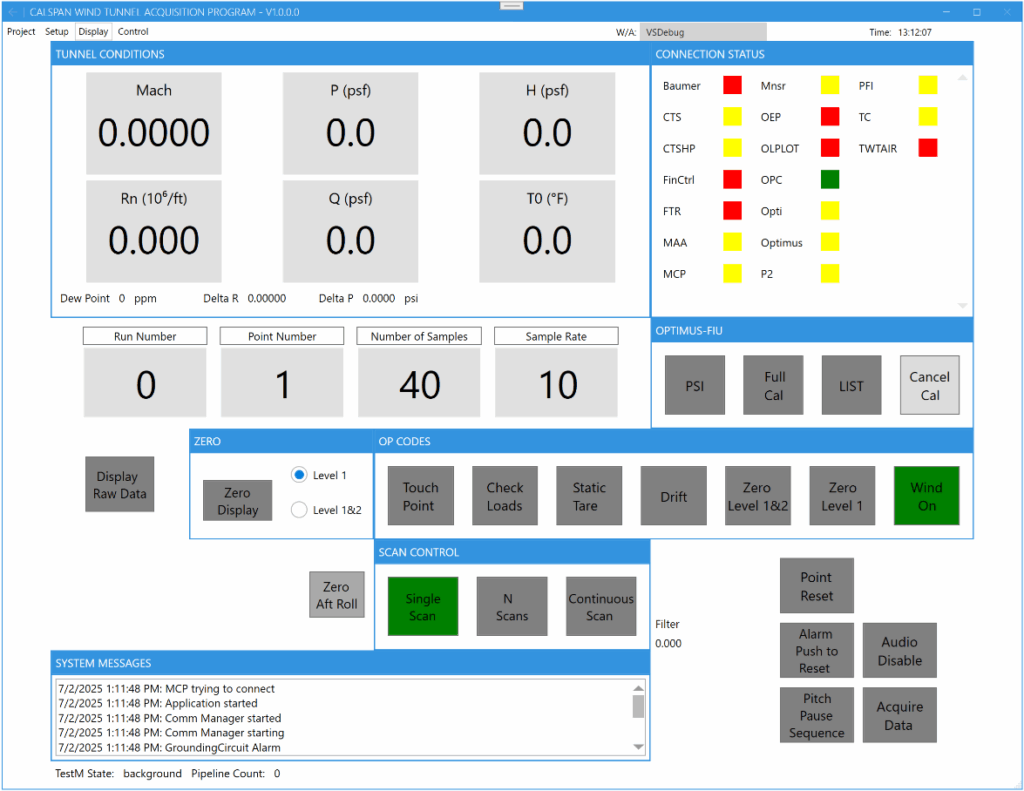

Transonic Wind Tunnel Test System Upgrade

Improved Reliability, Reduced Stress, and New Tools People Want to Use.

Client – Calspan

(tunnel image courtesy of Calspan)

Challenge

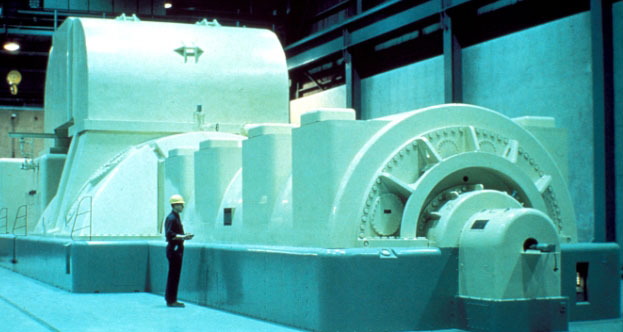

Calspan operates a transonic wind tunnel capable of continuous Mach-speed testing of a wide range of aircraft designs.

The overall test system comprised three subsystems that were all state of the art at the time of design, over 20 years ago, but whose components were either obsolete or soon to become so.

Calspan contacted us, and others in a competitive bid, to design, reuse, replace, and build a new test stand to maintain and even upgrade the test capabilities. At a high level, the requirements were to provide similar signal conditioning, hardware functionality, and software user experience.

Working together

Collaboration between our teams was key to success for this upgraded test system.

From the start, we all agreed that we’d have to do some reverse engineering to extract the details and nuances of the existing test system. This task was accomplished by reviewing existing items such as documentation and C++ code. As anyone who has done obsolescence upgrades knows, sometimes the source code is the best source for system requirements due to the wealth of details inherent in source code. Also, some aspects of system operation could not be easily documented, and Calspan personnel helped with defining the desired user interface and user experience.

The reverse engineering effort was enlightening and critical to assure that we all knew where we were headed.

Some highlights of design topics on which we collaborated:

Must-have existing features – Defining features of the existing system that were required in the upgraded system. For example, keep the user experience as similar as possible so operators would be familiar with operation. And, keep the output data file formats the same, so downstream processing apps would still work.

Improved functionality – Ways to grow capability using the better functionality that the newer hardware and software offer as compared to the old subsystems.

Efficient data processing – Recognition of the portions of the software that had to execute quickly and efficiently perhaps at the expense of a “fancy” architecture.

Improved UX – Options for combining the user screens from the three subsystems into one app.

Acceptance Test – Defining the Acceptance Testing method for the upgraded system.

Software development approach – Consulting on software development and maintenance practices, such as using object-oriented design and source code control to ease collaboration.

The balance between full automation and manual operation was also discussed during initial collaboration. Calspan felt more comfortable with, and was familiar with, operating in a semi-manual mode, where walking through a test sequence was best handled semi-automatically with a set of operators at their stations. Nevertheless, we were able to suggest some improvements to the system usability and extensibility. For example:

- Adding better visual feedback on certain conditions via plots and graphs to indicate an imminent alarm situation.

- Performance monitoring for operational aspects like the onset of flutter at certain positions and loads on the aircraft model.

- Modern code design and dev ops to allow Calspan to customize as desired.

Overall, we followed an iterative, Agile-like approach to design while using the technical details of the existing hardware and source code from the three subsystems to dictate the requirements necessary to reproduce, and even extend, the existing functionality.

Solution

The data acquisition part of the test system was implemented in two parts:

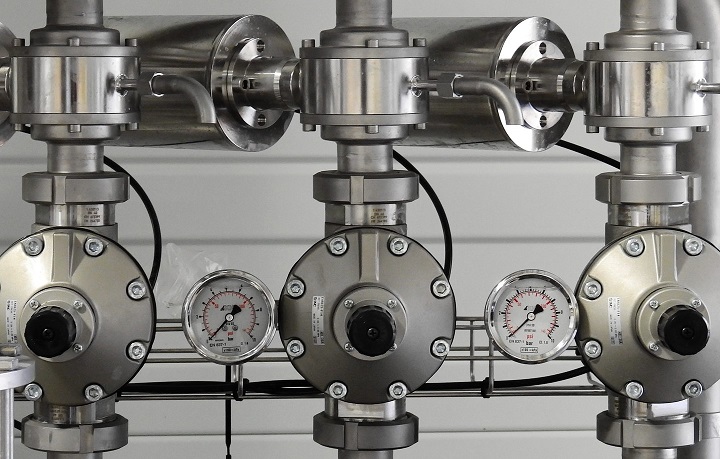

- a Precision Filters, Inc. (PFI) signal conditioning front end and,

- a National Instruments PXIe data acquisition chassis and modules. The PXIe chassis is connected to a server-class PC with MXI to handle all the acquired data and communication messaging between this test system and other special instruments and subsystems.

The signal conditioning was implemented with 128 channels of Precision Filters, Inc. 28124 transducer conditioner. This hardware is the only equipment on the market that met the needs of the client due to its capabilities for voltage and current excitation and a slew of gain and analog filtering options. PFI equipment is used extensively in wind tunnel and other critical measurement applications where performance, stability, and reliability are crucial, so the fit made a lot of sense. Also worth noting, although PFI offers an API to their chassis to enable automated or interfaced calibration, we chose to use their native calibration application because it just works and our interface would have been just a thin shell talking to their software.

The PXIe data acquisition comprises 128 channels of 24-bit, 200 kS/s digitizer analog input and additional 24-bit, 5 kS/s digitizer analog inputs. The PXI equipment is connected to a server-class PC via an MXI interface. The MXI interface offered the required bandwidth, and the server-class PC had plenty of processing power to manage the data flow from A/D to file storage while tending to the user displays. This arrangement is capable of simultaneous processing, alarming, visualization, and storage to disk at full rate.
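To give a feel for what "full rate" means here, the raw data rate can be estimated from the channel counts and sample rates. The sketch below is back-of-the-envelope arithmetic only. It assumes each 24-bit sample is stored in a 4-byte word (the actual on-disk format is not stated) and uses the 32 low-speed channels mentioned in the Benefits section.

```python
def aggregate_rate_bytes_per_s(channels: int, samples_per_s: int, bytes_per_sample: int) -> int:
    """Raw data rate, in bytes per second, for a group of channels sampled together."""
    return channels * samples_per_s * bytes_per_sample


# Assumption: each 24-bit sample is stored in a 32-bit (4-byte) word.
BYTES_PER_SAMPLE = 4

# 128 high-speed channels at 200 kS/s per channel (from the case study).
high_speed = aggregate_rate_bytes_per_s(128, 200_000, BYTES_PER_SAMPLE)

# 32 low-speed channels at 5 kS/s per channel (channel count from the Benefits section).
low_speed = aggregate_rate_bytes_per_s(32, 5_000, BYTES_PER_SAMPLE)

print(f"high-speed: {high_speed / 1e6:.1f} MB/s")
print(f"low-speed:  {low_speed / 1e6:.2f} MB/s")
```

Under these assumptions the high-speed channels dominate at roughly 100 MB/s sustained, which is the load the MXI link and the server-class PC have to carry while also processing, alarming, and displaying.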

Software was implemented almost entirely using Visual Studio C# and .NET 8.0. A small, but important, signal transformation portion was developed in LabVIEW Real-Time on a CompactRIO.

User interface, test and sensor configuration, data acquisition, data processing, data storage, force balance calibration and alarming are all implemented in .NET.

Another overall goal was Calspan’s desire to maintain the application software. We tackled this goal in two ways:

- First, the original software spanned 4 code bases: 3 for the 3 subsystems mentioned above and 1 for calibration measurements. Not an ideal arrangement for software maintenance. When we rewrote in C#, the code base was refactored so that common software classes could be shared, such as data acquisition functionality.

- Second, it is very typical that Calspan’s customers have some unique measurement requirement such as specific sensor selection or additional measurement or control equipment. These requests are more easily accommodated by Calspan now, as compared to the previous system, because the refactoring and combining of the apps simplifies code edits.

Benefits

The main benefits of this obsolete test system upgrade were:

- Modern and dependable equipment performance

- Upgraded test system capabilities

- Simplified usability

- Improved test system maintainability

- Ability to reconfigure and/or add functionality more easily than with the prior system

Also important to mention is the benefit of working with a collaborative team. The existing system, composed of the multiple subsystems, was complex with many subtleties. As with many older systems, the existing documentation was missing some important requirements – it’s just plain hard writing requirements. Without Calspan and Viewpoint having an open dialog and willingness to spend the time to understand options and make appropriate tradeoffs, the benefits of this upgrade project would not have been realized as cleanly. The collaboration was especially important in the design and acceptance testing phases of the project.

With this upgrade, Calspan has a reliable and flexible testing platform.

The previous hardware was degrading and, while not preventing tests from proceeding, channels were increasingly unusable as they failed. The replacement data acquisition hardware upgrades the previous test system with 128 high-speed channels, up from 96, and adds 32 low-speed channels. Previously, the test system acquired these high-speed channels at 100 kS/s per channel. Now the high-speed channels can be acquired at 200 kS/s per channel. All channels are now at 24-bit resolution, up from 16-bit, vastly improving dynamic range. Finally, the high- and low-speed channels can be combined into 160 channels of low-speed data with alarms (via real-time signal processing).
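As a rough illustration of the 16-bit to 24-bit improvement, the textbook formula for the quantization-limited signal-to-noise ratio of an ideal N-bit converter with a full-scale sine input is about 6.02·N + 1.76 dB. The sketch below applies that formula. It is a theoretical upper bound, not a measured figure for this system; real converters deliver fewer effective bits.

```python
import math


def ideal_dynamic_range_db(bits: int) -> float:
    """Ideal quantization-limited SNR (full-scale sine) of an N-bit converter.

    Equivalent to the familiar 6.02 * N + 1.76 dB rule of thumb.
    """
    return 20 * math.log10(2 ** bits) + 10 * math.log10(1.5)


old = ideal_dynamic_range_db(16)
new = ideal_dynamic_range_db(24)

print(f"16-bit ideal: {old:.1f} dB")
print(f"24-bit ideal: {new:.1f} dB")
print(f"improvement:  {new - old:.1f} dB")
```

The eight extra bits are worth about 48 dB in the ideal case, regardless of how close either converter gets to its theoretical limit.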

This new and modernized wind tunnel test system also brings faster test configuration. Calspan noted to us that two of the biggest improvements for a test setup configuration are having software-selectable filtering (i.e., not needing to change hardware modules) and having integrated multi-rate data acquisition (i.e., without having to patch in and set up separate acquisition hardware). Calspan estimates saving 2 to 4 hours per test setup.

Moving forward, Calspan will be able to satisfy their customers’ needs with this improved test system performance and the ability to reconfigure or expand functionality for future programs.

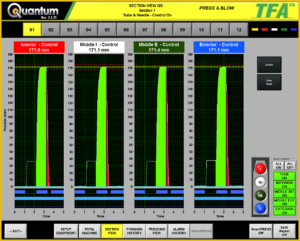

System Overview

| SOFTWARE FUNCTIONS |
|---|
| Channel configuration |
| Alarming |
| Real-time graphs |
| Calibration for 6-axis and 1-axis sensors |
| Data storage |

| HARDWARE USED |
|---|
| Dual 19″ rack test stand |
| Precision Filters 28124 modules, 28000 chassis, and associated connectivity hardware |
| NI PXIe modules and chassis for high-speed AI |
| NI cDAQ and cRIO for AIO and DIO |
| Server-class PC |

Selecting replacement hardware for an obsolete test system

A disaster hits – repair to meet schedule, and then upgrade after

Client – A major aerospace company

Challenge

Our client’s design validation test system was about 10 years old. They realized that the NI hardware in the test system was soon to be obsolete and they would have to upgrade it someday.

That day came sooner than expected when an issue in the test cell room caused excessive temperatures and smoke, overheating some PXI hardware and other components in the test rack. The test system had to be upgraded. (Everyone was OK – the issue was handled quickly.)

The timing of this event was especially unfortunate, as they were in the middle of some testing that needed to be completed to meet a schedule so that they didn’t hold up the design team.

So, the client made the decision to replace the existing PXI modules with the same type, rather than upgrading directly – that upgrade would have to come later. But, even after replacing the failed modules with the same part numbers, something wasn’t correct since the system didn’t work.

Working toward a solution together

Our client contacted us for help getting past this hurdle.

It turned out that the software for the original system was developed by a one-man system integrator with whom we occasionally collaborate. Our client had asked that integrator to help, but he was unable to respond rapidly. We worked with him to pick his brain about possible causes, but no obvious solution was forthcoming.

We dug into the issue, which was a timing error, and discovered that one of the replacement boards needed a hardware driver update, even though the catalog part number was the same as the module being replaced.

This troubleshooting was best suited for someone with intimate knowledge of NI hardware – it took longer to figure out the issue than it did to fix it.

With that issue resolved, and after our client performed their tests, they asked us to start designing a test system to replace the soon-to-be obsolete hardware with new hardware.

Replacement with new hardware was not a one-to-one swap, making this design a perfect fit for our Hardware Selection Services consulting.

We took the details and requirements of the existing test system and figured out the replacement hardware. We needed to consider channel counts, acquisition rates, and costs. For example, the Sound and Vibration card in the original system had 8 channels but a replacement only had either 4 or 6 channels. Other tradeoffs were also made.
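The channel-count tradeoff comes down to simple arithmetic: fewer channels per card means more cards, and therefore more chassis slots, for the same number of signals. A minimal sketch follows. The required channel count of 16 is hypothetical, chosen only for illustration, and the actual counts and costs for this project are not given.

```python
import math


def cards_needed(required_channels: int, channels_per_card: int) -> int:
    """Smallest number of cards that provides at least the required channels."""
    return math.ceil(required_channels / channels_per_card)


REQUIRED_CHANNELS = 16  # hypothetical requirement, for illustration only

for per_card in (8, 6, 4):  # original card, then the two replacement options
    cards = cards_needed(REQUIRED_CHANNELS, per_card)
    spare = cards * per_card - REQUIRED_CHANNELS
    print(f"{per_card}-channel card: {cards} cards, {spare} spare channels")
```

With this example requirement, two original 8-channel cards would become three 6-channel cards or four 4-channel cards, which is why slot count and cost have to be weighed alongside acquisition rate.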

Our approach for this type of consultation:

- starts with recommendations to the client,

- followed by discussion about pros and cons,

- and ending (maybe after a few iterations) with a replacement system that meets the functionality of the original system. The client even added some additional functionality he’d been wanting for a while.

The cost of this consultation service was covered by the purchase of the hardware from Viewpoint. In this instance, that arrangement worked to the client’s advantage: because some items had long lead times, we worked with him to split the order into two parts to meet his budget and scheduling needs. Specifically, we worked with NI to swap a controller running the latest version of Windows for one that ran the previous version, so that we could hit shipping dates 5 weeks earlier than otherwise. Our experience with the NI buying process was critical to rectifying this situation; a typical NI customer wouldn’t have thought to check for this solution.

After the new system arrived, the client contracted us to rework the software to use the new hardware. Updated drivers were needed, of course. Because the original integrator had given the client the LabVIEW source code for the application, we could decipher how it operated and determine how to incorporate the new and different channel configurations per card while supporting the functionality that the additional cards offered. Once the configuration of this new hardware setup was completed, we tested that the application was once again working as expected.

Benefits

Working with an integrator that offers both consulting for selecting new NI hardware and turnkey test system design and build capabilities enabled our client to move from a broken system to a new one in stages.

This approach enabled them to complete their scheduled tests as quickly as possible while giving them a path to the future based on the new system that we helped them build and deploy.

How we helped

For this project, we mostly helped with the selection and replacement of NI hardware with a little side help from some system integration services to bring the test system application software to full functionality.

System Overview

| SOFTWARE FUNCTIONS |
|---|
| LabVIEW app for data acquisition, display, and storage |
| Configuration of channels through MAX for connection to the LabVIEW app via DAQmx |
| Test sequencing definition for system control via output channels |

| HARDWARE USED – (for replacement system) |
|---|
| NI PXIe controller and chassis |
| NI Sound and Vibration cards |
| NI Timing and sync card |
| NI Thermocouple card |
| NI General analog I/O |

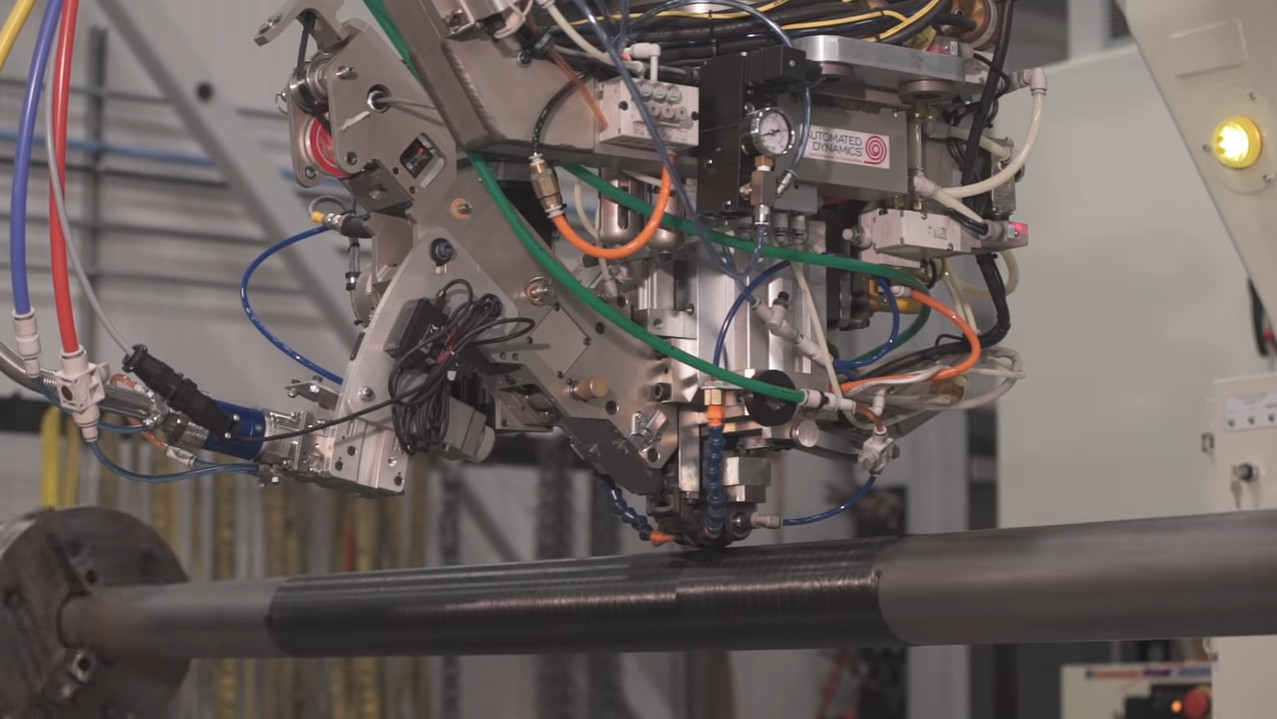

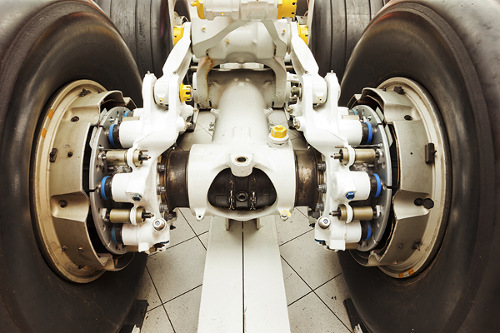

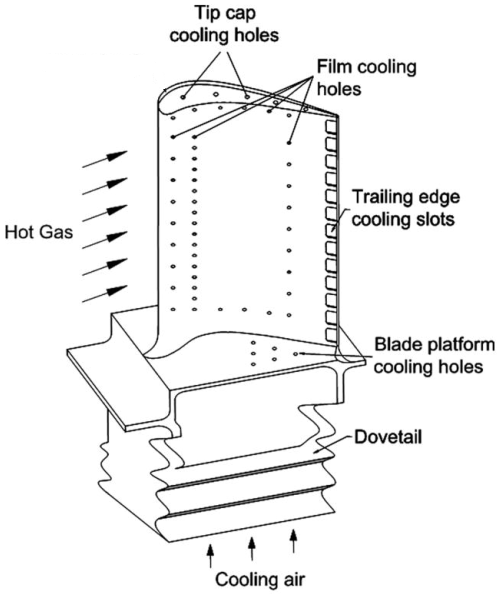

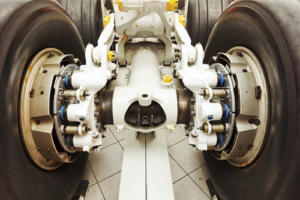

Manufacturing Test System – Aircraft turbine blade quality inspection

Improving quality by detecting blocked holes in aircraft turbine blades with an automated test system

Client – Large aerospace company

Challenge

Fuel-fed aircraft turbines run extremely hot. At very high temperatures, a turbine’s blades suffer a shorter life span than if run at cooler temperatures. Consequently, turbine manufacturers put cooling holes in the blades to lower the blade temperature during operation. These holes carry cool air or gas from the blade mount along channels that eventually arrive at holes in the blade surface. This practice keeps the blade much cooler than without the flow.

Some blades have a huge number of holes. Inspecting that each hole is open and has sufficient flow is time-consuming and, if inspected by a person, prone to error due to boredom and distraction. Plus, carpal tunnel syndrome issues are present due to repetitive motions. Multiply these issues by the large number of blades in each turbine and inspection becomes a significant effort.

Our client wanted to implement an automated inspection station to increase throughput and decrease mistakes. Sending defective blades down to the subsequent manufacturing steps is pure manufacturing waste when they are rejected at some later step. Reducing operator injury was also important.

Image courtesy of CC by 4.0 https://creativecommons.org/licenses/by/4.0/ from Zhang, R.; Liu, P.; Zhang, X.; Xi, W.; Liu, J.; “Recent Developments in the Aerodynamic Heat Transfer and Cooling Technology of Gas Turbines Endwalls”. Aerospace 2023, 10, 702. https://doi.org/10.3390/aerospace10080702

Solution

Collaborating with our client, we created a manufacturing inspection system that uses an IR camera and a robot to scan different regions on the blade under inspection. The camera is placed at specific locations and at various viewing orientations to fully inspect all the holes in the blade.

A PC running a LabVIEW test application manages the entire process. Safeties and control of access to the part under inspection are included to protect personnel and product. This safety monitoring is accomplished with hardwired sensors and switches, along with some monitoring via FPGA I/O.

Working with our client and our machine builder subcontractor, we helped select the NI hardware best suited for this test system. Our primary focus was on the interface between the IR camera and the system safeties.

Benefits

- The reliability, reproducibility, and speed of the automated inspection augment the operator’s capabilities.

- Improved quality of parts released to downstream production steps.

- The measurements collected during inspection of each part are available for quantitative analysis of the performance of the manufacturing processes. This post-inspection information helps improve quality all around.

Working Together

This project was the culmination of over 10 years of development effort between our client and Viewpoint engineers.

We started with a basic R&D proof of concept system.

After some tweaks to the process and associated system redesign, we were ready to use a prototype system in a trial run situation where manufactured parts were run manually. This stage gave confidence to those in manufacturing that the system would improve part inspection while speeding up the original manual inspection process. Some changes were made at this stage to decrease costs and improve the test engineer and operator interfaces. It was then placed into the production environment.

Along this entire multi-year development path, the level of automation was increased and the system design was adjusted as we learned from each other about what worked and what didn’t. For example, early in the R&D phase, attention was paid to the analysis algorithms and data required to make those reliable and accurate. And, for the production phase, we changed hardware components to make the test stand hardware less expensive and more robust while reworking the user interface of the software application to be simpler to configure and run.

Viewpoint and our client formed a tight team during this journey. One constant was the Viewpoint engineers involved, even as the people on the client’s side changed while the inspection system moved through departments and physical locations within our client’s facilities. The effort culminated in a complete test rig with a robot, a test stand for data acquisition and control, and safety components to protect the operator.

System Overview

The system is composed of the following items:

- Test stand and control hardware cabinet

- LabVIEW test application running on a PC

- Robot and motion controller

- Vision system for IR camera

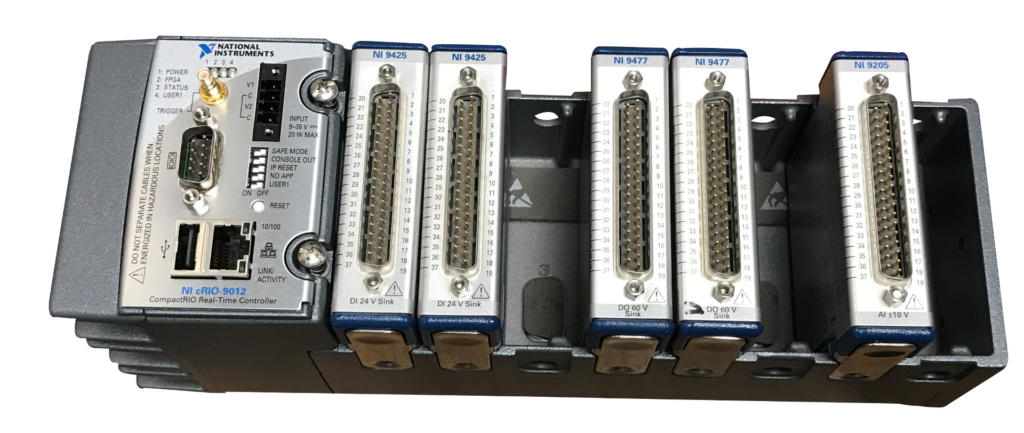

- NI CompactRIO FPGA digital I/O for controls and safeties

- NI CompactRIO analog I/O for equipment status

- Hardwired safeties and switches for operator and equipment protection

The LabVIEW application manages the entire inspection cycle:

- Assuring a part is present in the nest before starting the inspection process,

- Downloading the part-specific motion profile to the robot,

- Acquiring the images from the IR camera,

- Analyzing the images,

- Compiling the results, and

- Preparing a test report for the part.

Simultaneous with this cycle, the application checks digital and analog I/O for machine status and errors.
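The inspection cycle listed above can be sketched as a simple loop over the positions in a motion profile. This is an illustrative Python outline, not the LabVIEW implementation. Every function name here (`part_present`, `move_to`, `grab_image`, `find_blocked_holes`) is a hypothetical stand-in for the corresponding hardware or analysis step, and the concurrent machine-status monitoring is left out for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Sequence


@dataclass
class InspectionResult:
    part_id: str
    blocked_holes: List[int] = field(default_factory=list)

    @property
    def passed(self) -> bool:
        # A part passes only if no blocked holes were found at any position.
        return not self.blocked_holes


def run_inspection(
    part_id: str,
    positions: Sequence[str],
    part_present: Callable[[], bool],
    move_to: Callable[[str], None],
    grab_image: Callable[[], str],
    find_blocked_holes: Callable[[str], List[int]],
) -> InspectionResult:
    """One inspection cycle: check the nest, visit each position, analyze, compile."""
    if not part_present():
        raise RuntimeError("No part in the nest; inspection not started")
    result = InspectionResult(part_id)
    for position in positions:       # positions come from the part's motion profile
        move_to(position)            # stand-in: returns once the part is in view
        image = grab_image()
        result.blocked_holes.extend(find_blocked_holes(image))
    return result


# Usage with stub hardware (all values are made up for illustration).
current = {"pos": None}

def move_to(pos: str) -> None:
    current["pos"] = pos

def grab_image() -> str:
    return f"image@{current['pos']}"

def find_blocked_holes(image: str) -> List[int]:
    return [7] if image.endswith("root") else []

result = run_inspection("SN-001", ["tip", "root"], lambda: True,
                        move_to, grab_image, find_blocked_holes)
print(result.passed, result.blocked_holes)
```

Passing the hardware steps in as callables keeps the cycle logic testable without a robot or camera attached, which is one plausible way to structure such an application.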

If a part model has not been inspected before, the LabVIEW application is used to create the motion profile for that part model.

When the test on a specific part is started, that motion profile is downloaded to the motion controller based on the part type as selected in the application.

After testing a part, the operator is presented with the test results where the option to accept, retest, or reject is offered. The operator is integral to this automated test. In any case, the test data is archived.

When the test is completed, the operator unloads the part for final disposition and loads the next part to inspect.

The LabVIEW application also has a diagnostic mode and configuration editor which is used by the operator or manufacturing engineer to evaluate and tune the inspection process.

The robot movement is managed by a motion controller based on the motion profile created via the LabVIEW application. The PC-based image acquisition is done when the motion controller alerts the LabVIEW application that the part is oriented and positioned within the field of view of the camera. Images are acquired for each of the positions defined in the motion profile.

Safety for both the operator and the part is handled in two ways. First, the CompactRIO FPGA checks system status via digital and analog inputs. Second, hardwired safety sensors and switches assure that the operator is out of the way when the robot starts moving and that the part is in the nest ready for inspection. An example of these “hard” sensors is a light curtain, which stops the system if the curtain is interrupted during a test.

| SOFTWARE FUNCTIONS |
|---|
| NI FPGA for control and safeties |
| PC LabVIEW test application |
| System configuration |
| Data management |
| Safeties and machine status |

| HARDWARE USED |
|---|
| Articulated robot |
| Vision camera |
| FPGA digital I/O |
| FPGA analog I/O |
| Hardwired safety circuit for personnel and product protection |
| Touchscreen monitor |

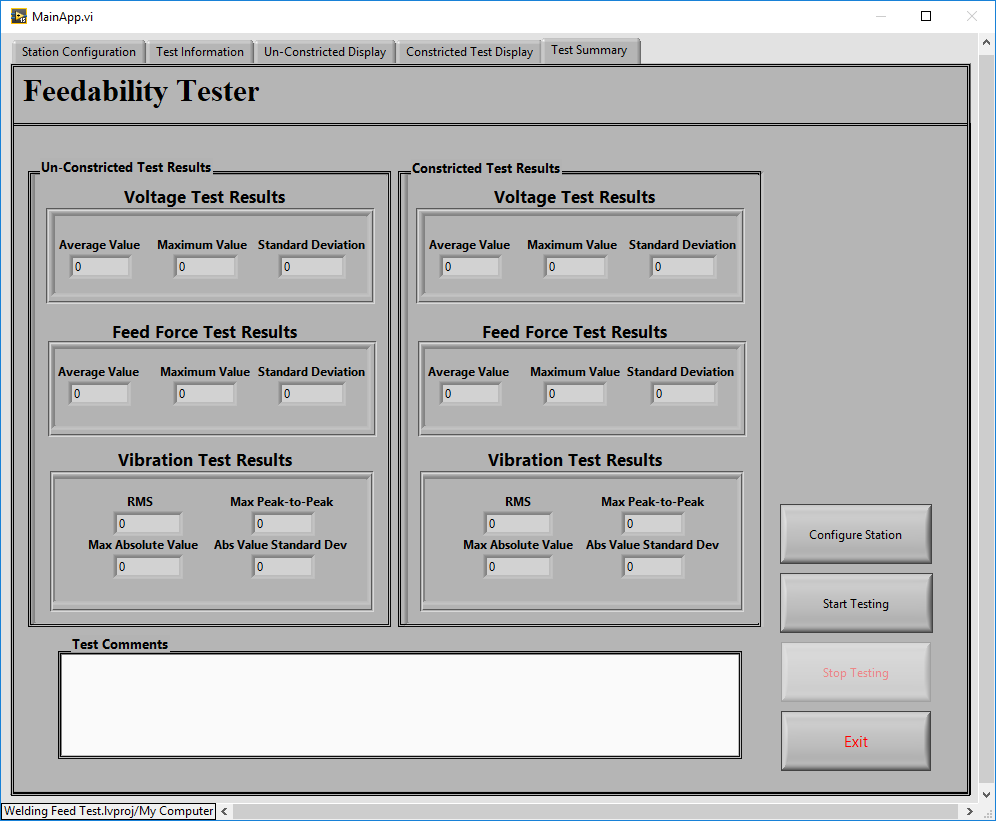

Enhancing an existing test system for ease of maintenance

Reduction of time and frustration motivates software upgrade of a capable but inflexible test system

Client – A world-wide manufacturer of refrigeration units

Challenge

Our client had been using a 15-year-old LabVIEW-based test system application that was becoming difficult to update. Plus, the designs of their newest refrigeration units were more complex than ever, requiring new test steps.

On top of that, our client wanted to give the operator flexibility in sequencing the test steps. For example:

- the operator might want to run a specific test twice to verify operation,

- the operator might want to restart a sequence in the middle after reworking a part, or

- the test engineer might want to add another test step to further clarify operational data for historical trend analysis.

The existing application was based on a state machine architecture. While state machines can be edited to handle different sequence flows, this test application had numerous alterations over the past 15+ years to support the needs for testing new product designs. These amendments compounded over the years into an unwieldy test application.

New products were about to be introduced which would require additional modifications to the existing state machine and subsequent verification that:

- changes worked as planned and

- changes didn’t affect any other existing modes of operation. This need was increasingly daunting.

The Design and Development Process

Very early in discussions, we showed our client a test application based on an object-oriented (OO) software architecture, and its associated user interface, that we had used in previous test system projects for other clients. We thought it might satisfy some of the desires we were hearing about:

- how the older state-machine-based test system was difficult to maintain,

- how some users were frustrated by the inflexibility of the test sequencer, and

- how the system could be made easier to update for new product requirements.

Moving the existing application over to this new architecture would clearly require more effort than another patch, so we had to decide if the cost for this approach would be justified based on two major benefits:

- Simplify the management and verification of future changes.

- Enable flexible test flows to give the test operator a better user experience.

Both benefits would accumulate cost savings for maintenance and upgrades going forward. After discussions with our client, we jointly decided this approach was justified.

After reviewing this OO approach, our client asked us to use it to develop a small test system for another component of the refrigerator products. This small system gave our client the chance to explore the “look and feel” of this new architecture and user interface design before embarking on the test system discussed in this case study.

That trial project was a success, and we were given the go-ahead. The benefits, as described above, were clear.

After the development was complete, we:

- worked with our client on integrating the test system into the existing test station,

- performed acceptance testing, and

- delivered the final items, like source code, to our client.

Solution

The initial upgrade tasks in creating this new application started by identifying the code for the test steps in the existing test application, which was based on that state-machine architecture, and then rewriting the test step functionality with the OO methodologies discussed earlier.

We also reworked the sequencing of steps to use an OO-based test sequencer. The test sequencer was reused from some of our prior projects.

For each test step in the existing test application, we repurposed the existing LabVIEW code in two ways:

- First, we identified code functionalities that were commonly used throughout the state machine for the purpose of defining a set of reusable step types.

- Second, we converted that common functionality into LabVIEW classes via copy-paste into the class methods, coupled with extraction of the configuration parameters needed to give each class the behavior needed for a particular step.

For example, the existing state machine contained many steps that provided a request-response method over a data bus. These similar steps were corralled into a single class with methods for data communication. Thus, each of the multiple original states in the existing application which requested parameter values could be made by calls to the same class in the new application simply by providing specific configuration inputs to the same class method.
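The idea of collapsing many similar states into one configurable class can be sketched in a few lines. The actual system uses LabVIEW classes, so the Python below is only an analogy. The class name, the parameter names (`EVAP_TEMP`, `COMP_CURRENT`), and the limits are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RequestResponseStep:
    """One reusable step type: request a value over the bus and check its limits."""
    name: str
    request: str   # identifier of the parameter to request (hypothetical)
    low: float
    high: float

    def run(self, bus: Callable[[str], float]) -> bool:
        value = bus(self.request)            # same method for every step...
        return self.low <= value <= self.high  # ...only the configuration differs


# A fake data bus standing in for the unit under test (made-up values).
bus_values = {"EVAP_TEMP": -18.5, "COMP_CURRENT": 4.2}
fake_bus = bus_values.__getitem__

# What used to be separate states become instances with different configuration.
steps = [
    RequestResponseStep("Evaporator temperature", "EVAP_TEMP", -25.0, -15.0),
    RequestResponseStep("Compressor current", "COMP_CURRENT", 1.0, 3.5),
]

results = {step.name: step.run(fake_bus) for step in steps}
print(results)
```

Adding a test for a new product then means adding a configured instance rather than editing the sequencing logic, which is the maintainability benefit the case study describes.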

Benefits

This OO design simplified updates to the test steps and their sequencing.

Furthermore, the operator interface was simpler, cleaner, and allowed the operator to manage the flow of the test steps. For example, operators could jump around in the test sequence when needed, say for reviewing the occasional confusing result or helping to develop the production test sequence.

The OO design of this new test system application was aimed squarely at improving the user experiences of both the operator and test engineer. Secondarily, the OO design will help the test system developer by untangling the original state machine code into supportable, extensible, and maintainable software.

Some specific benefits available from this new OO design:

- Reduced frustration – If the operator noted something confusing about the outcome of a test step, that step can be rerun without needing to restart the entire test sequence.

- Improved operational efficiency – The operator and/or test engineer can try a different sequence of test steps for operational or efficiency improvement.

- Faster test system updates – Two aspects make updates faster and cleaner. First, new product designs can be accommodated with less worry about whether the fragile state machine code will break. Second, the code modularity of OO test steps makes it easier to implement new tests.

These usability and maintainability features will save our client cost and schedule in future product upgrades as well as highlight the contributions of the test system on production efficiency.

System Overview

The test hardware was based on NI CompactDAQ and the application was written in LabVIEW. The automated test system provided the following main features:

- Test configuration based on the type of part being tested

- Test sequencing with part-specific test steps

- Test sequence execution can be managed by the operator in real-time

- Display of test results as the sequence progresses

- Archiving of test data for historical tracking

The test flow that this application runs is:

- The operator enters the model and serial numbers (typed in or scanned in).

- The test system looks up the model number and finds the test sequence to run.

- The test system populates the sequencer screen with the appropriate test sequence.

- The operator can select a step from which to start or just click the Start Test button to begin the entire sequence.

- Buttons at the bottom of the sequence display allow the user to Pause, Abort, or Resume the sequence.

- Executed test steps are highlighted in green (pass) or red (fail) to indicate how the sequence is progressing. The operator can scroll through the test sequence to review the outcome of each step.

- If the sequence is configured to do so, the sequence may pause at a failed step so the operator can repair and retest that step.
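The flow above, in particular starting from a chosen step and pausing at a failed step, can be illustrated with a minimal sequencer. This is a simplified sketch in Python rather than the LabVIEW sequencer used in the project. The function name and the step names are hypothetical.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Step = Tuple[str, Callable[[], bool]]  # (step name, action returning pass/fail)


def run_sequence(
    steps: Sequence[Step],
    start_index: int = 0,
    pause_on_fail: bool = True,
) -> Tuple[List[Tuple[str, str]], Optional[int]]:
    """Run steps from start_index; return the results and the paused step index, if any."""
    results: List[Tuple[str, str]] = []
    for index in range(start_index, len(steps)):
        name, action = steps[index]
        passed = action()
        results.append((name, "pass" if passed else "fail"))  # shown as green or red
        if not passed and pause_on_fail:
            return results, index  # operator can repair, then restart from this index
    return results, None


# Hypothetical sequence for illustration.
sequence: List[Step] = [
    ("Power check", lambda: True),
    ("Bus communication", lambda: False),
    ("Thermocouple read", lambda: True),
]

results, paused_at = run_sequence(sequence)
print(results, paused_at)
```

Calling `run_sequence(sequence, start_index=paused_at)` after a repair reruns the failed step and continues, without restarting the whole sequence.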

The unit under test (UUT) was monitored by the test system to view sensors both internal and external to the UUT. The external sensors are used to detect the environment of the unit, such as being in position, connected to power, and so on.

Besides a few thermocouples and digital inputs, measurement data used to determine pass/fail was obtained from the UUT via the data bus.

All these inputs were handled by a set of NI modules in a 4-Slot cDAQ chassis.

| SOFTWARE FUNCTIONS |
|---|
| Data communications |
| Acquire sensor data |
| Control digital output |
| Acquire digital inputs |
| Test sequence management and execution |
| Archiving of all test results |

| HARDWARE USED |
|---|
| 1 Port High Speed Communication Module |
| 8 Channel AI Module |
| 8 Channel Sourcing DO Module |
| 8 Channel Sinking DI Module |

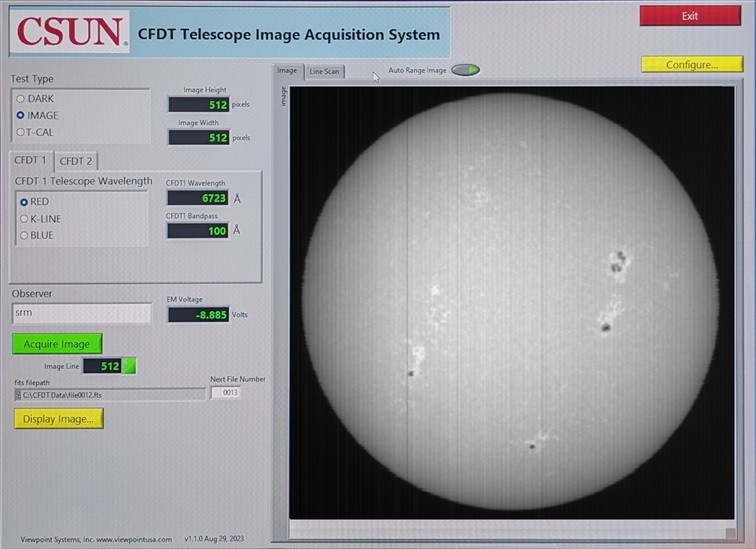

Updating an obsolete LabVIEW-based system for measuring solar irradiance

Maintaining continuity between old and new systems was important for correlation with nearly 40 years of historical data

Client – California State University at Northridge (CSUN)

Challenge

Our client had nearly four decades of data on solar irradiance (the amount of light our sun emits). Over a time span that long, equipment breaks down and needs repair or replacement. We were called in to replace the aging system with an upgraded one.

For this obsolescence upgrade, the solar irradiance measurements made before and after the equipment change-over had to be comparable to provide continuity in the measurements.

Consequently, our replacement system had to be checked against the existing system before it went completely defunct.

To that end, we needed to continue making the light measurements with the existing linescan photodiode array. This linescan device required some low-level digital signal control and handshaking to initialize and perform the measurement data collection.

Solution

The prior system used obsolete hardware from National Instruments (NI), such as an E-Series multifunction data acquisition card and a TIO-10 timer-counter card.

Besides being obsolete, this hardware used an old computer bus. Both problems were addressed with a new PC and new data acquisition hardware.

Furthermore, some support components also needed replacement, such as a non-functional power supply.

We also upgraded a circa 2013 LabVIEW application and added some new functionality. Luckily, the CSUN team had the source code.

All these components were delivered as a turnkey system to CSUN.

Benefits

Obsolete measurement system update – The upgrade enabled essentially unbroken measurements of solar irradiance across nearly 40 years. Overlapping data collection, used to compare previous and present data, gave confidence that the upgraded system can continue to collect important solar irradiance data for many years to come.

The sun’s output varies cyclically by about ±0.035% on average, following the sunspot cycle of about 11 years. For a plot of solar output over roughly 25 years, see: https://spacemath.gsfc.nasa.gov/sun/Earth8.pdf

How we worked together

CSUN researchers connected with us after they reviewed our capabilities on our website and had a subsequent conversation. Although the CSUN team had a very good technical understanding of the required upgrades, they were not experts in automation systems and were looking for a system integrator for help.

From our perspective, all the details about the operation of the existing system would be in the LabVIEW source code and electric / signal system schematics. As mentioned earlier, CSUN did have the source code. Some older schematics needed a bit more digging by us to identify all the relevant hardware.

Our proposal was based on this system information and one or two clarifying discussions. CSUN accepted and funded our proposal and we began the upgrade effort.

We didn’t have access to some parts of the system, such as the linescan array, so we tested the completed upgrade as much as we could at Viewpoint and then scheduled a trip to CSUN for the final installation and commissioning.

Once on-site at CSUN, the only surprise was a power supply that wasn’t functioning as expected. CSUN replaced that unit while we were on-site. After all the upgraded components were in place, the system was tested and commissioning was completed successfully.

System Overview

CSUN uses two Cartesian Full Disk Telescopes (CFDTs) to measure solar irradiance. These telescopes can measure irradiance at various specific wavelengths of light. Measurements are made daily and compared with space-based measurements.

Specifically, comparisons are made between irradiance measurements obtained from instrumentation on the SORCE satellite with the measurements obtained from CSUN’s ground telescope-based measurements. The space- and ground-based equipment measure different ranges of light wavelengths. The SORCE measurements span a wide range of wavelengths. This satellite was launched in early 2003 and its mission was completed in early 2020 (https://lasp.colorado.edu/sorce/). Note that SORCE measurements do not have to contend with Earth’s atmosphere.

The CSUN equipment measures at a few specific wavelengths. Comparisons between the two methods are important because satellites don’t last forever, necessitating ground-based equipment that is confirmed by space-based measurements. And, of course, ground-based measurements must contend with Earth’s atmosphere, so correction factors must be calculated and confirmed. Assessing the validity of long-term trends against shorter-term space-based data lends assurance to ground-based equipment measurements. The nearly 40 years of continuous CSUN measurements can proceed into the future with confidence.

The system we deployed replaced the obsolete equipment and software with:

- new measurement hardware,

- a workstation,

- and a replacement for the failed power supply.

The application software was upgraded as well to:

- bring it up to the current LabVIEW version,

- add some new functionality,

- and interface the linescan imager with the upgraded USB-based measurement hardware from NI.

| SOFTWARE FUNCTIONS |
|---|
| FITS image creation |
| Control of linescan array |
| Updated application with improved user interface |

| HARDWARE USED |
|---|
| Dell Workstation |
| NI USB Multifunction module |
| Cables, BNC breakout panel, power supplies |

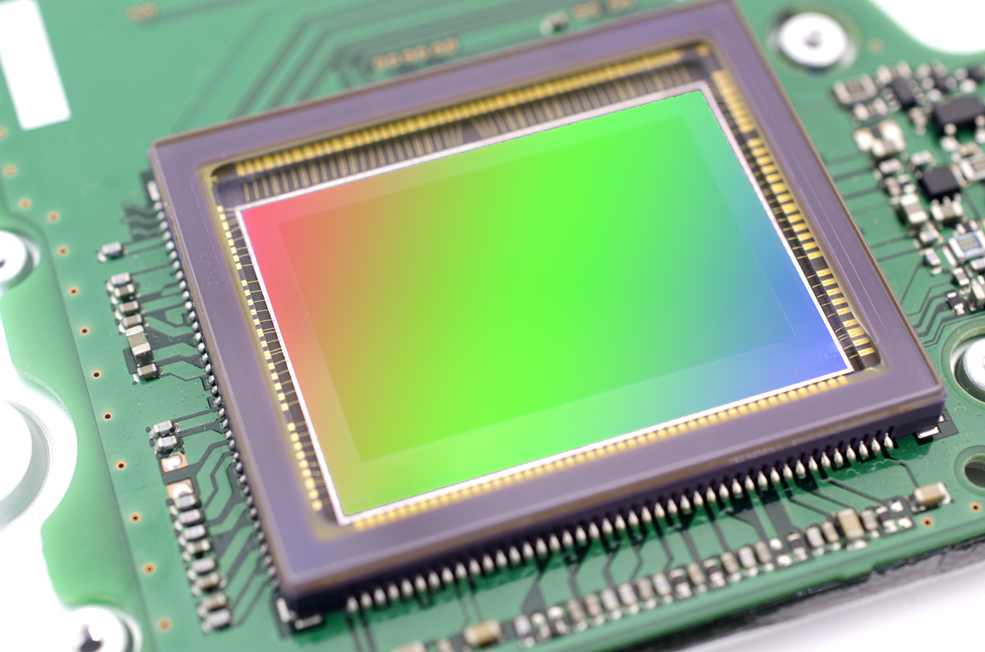

Automated production test of EO/IR imaging subsystem

Assessing quality of mission-critical electronics for imaging

Increased throughput by automated signal skew adjustment and pixel verification

Client – Worldwide supplier of products for aerospace and defense

Challenge

Our client wanted a test system that would significantly increase production rates for a very specialized focal plane array (FPA) and associated readout integrated circuit (ROIC) electronics.

In broad strokes, the system needed to support the following:

- Increase production test throughput as much as reasonably possible within budget and schedule constraints.

- Provide some low-code or no-code way to create new test protocols.

- Protect the DUT using hardware and software interlocks.

- Verify the correctness of the test image(s) and all of their pixels.

Solution

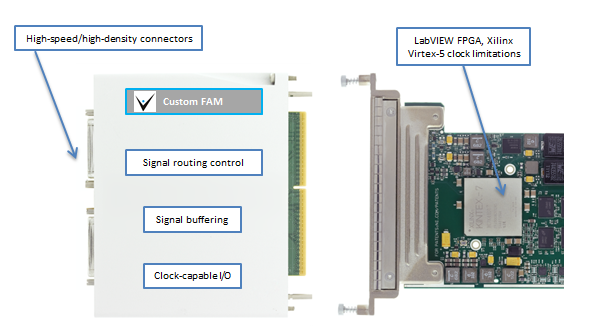

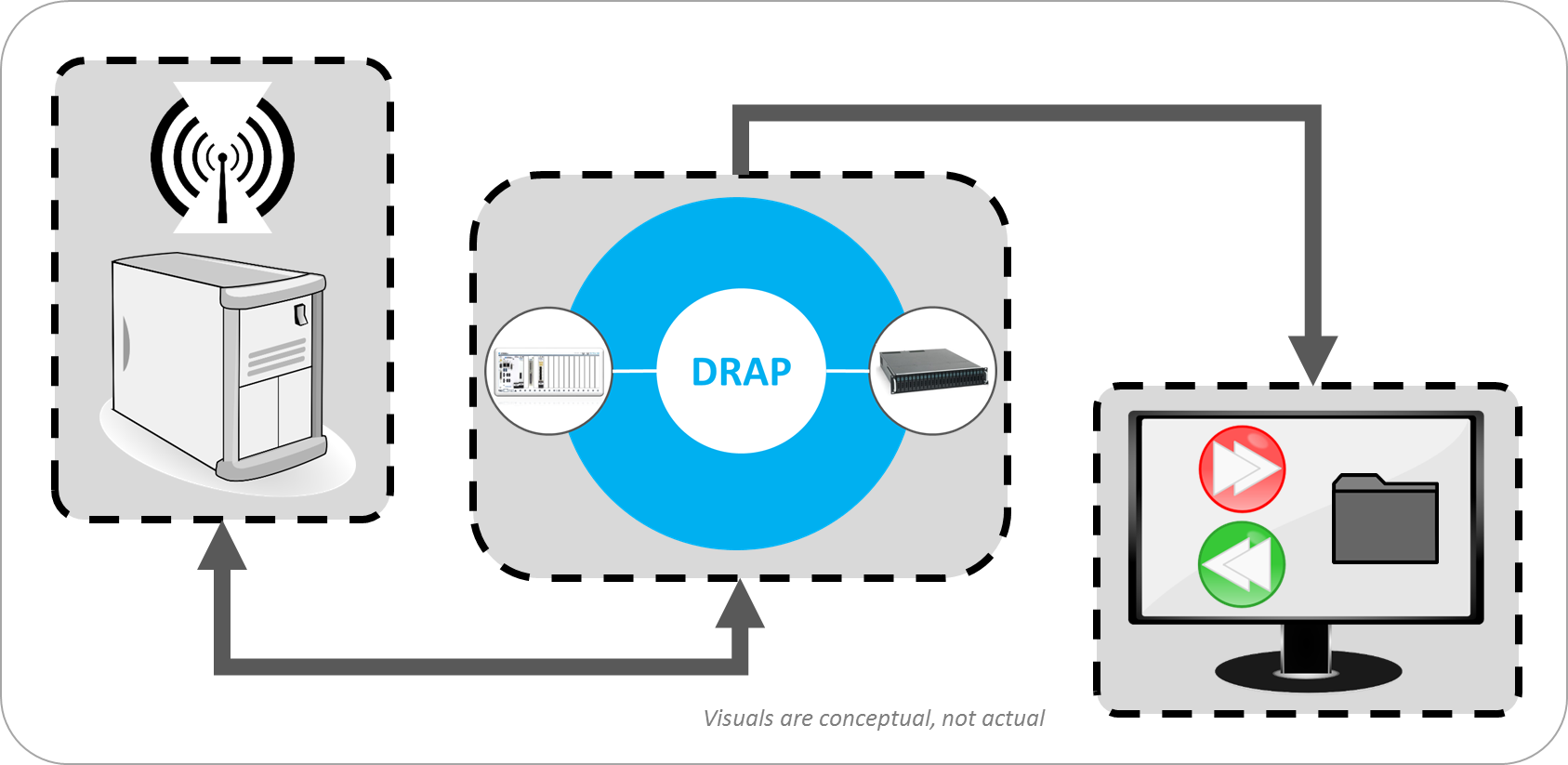

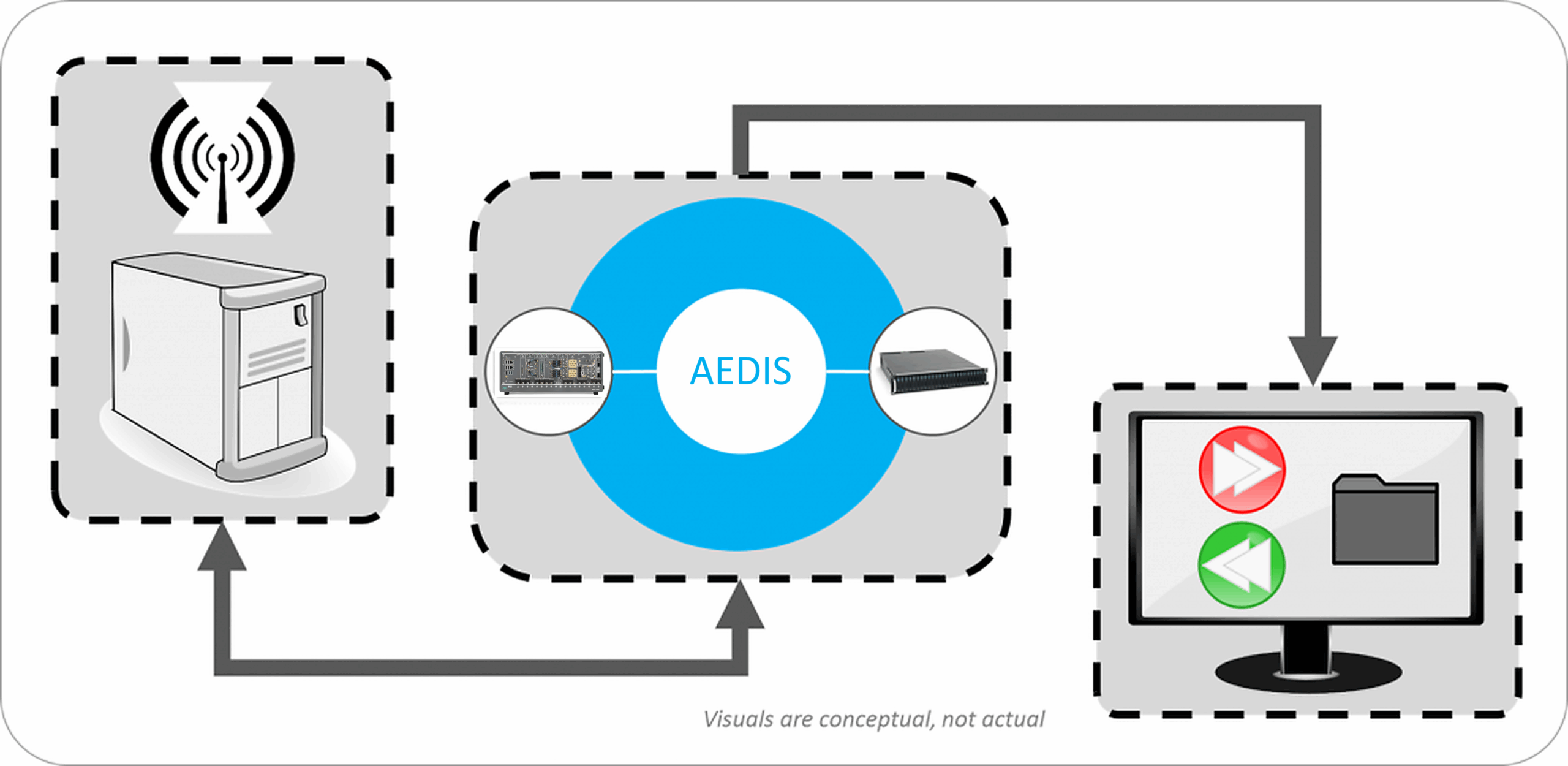

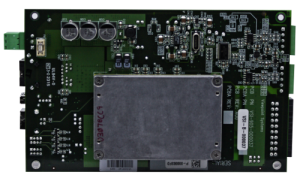

The FPA and ROIC testing for this client used many of the same techniques we have implemented for FPA/ROIC testers at some of our other clients. Thus, the solution was built around our AEDIS platform and some custom connectivity hardware which paired the DUT to the AEDIS hardware.

Specifically, the test needed to:

- Send digital signals from the AEDIS hardware to initiate and coordinate the test steps.

- De-skew bitstreams from the DUT.

- Organize and rearrange the bitstreams into image pixels.

- Provide custom “personality” modules to connect the DUT and AEDIS hardware.

- Protect the DUT from connection and power faults.

Benefits

All the bulleted items above are common requests from our clients and are supplied with the AEDIS platform or easily accommodated by design of the platform. Consequently, AEDIS often meets 80% or even 90% of typical client needs.

Thus, the client was able to cost-justify an AEDIS-based solution for two main reasons:

- overall system costs for an AEDIS-based solution were significantly less than a completely custom system and

- the increased production rates provided plenty of schedule buyback.

Furthermore, the script-based, low-code capabilities offered by AEDIS enabled:

- Support of different test images.

- Control of image transfer initiation, handling responses from the DUT, and flow.

- Version control (by the client) of script-based test configurations.

- Defined parsing of bitstreams to create the image pixels to simplify downstream image collection.

System Overview

The test system was built around AEDIS, which is a combination of five major components:

- NI FlexRIO PXI modules and chassis.

- Signal conditioning hardware.

- A REST API interface for the client’s test sequencer and LabVIEW FPGA for the hardware interfacing.

- An out-of-the-box browser-based app to interact with the AEDIS system.

- A source measure unit to supply and test the DUT’s power needs.

With this design, AEDIS acts as an instrument to incorporate into the client’s overall test system.

The NI FlexRIO modules use Xilinx FPGAs for digital I/O at the rates and channel counts required to fully test the FPA on the DUT. Some digital lines were used for commands to the FPA while most were used to receive output from the FPA.

The AEDIS interfacing hardware acts as an ITA while converting the FPA/ROIC signals to types expected by the FlexRIO. A custom “personality” module provides the physical connectivity from the client’s hardware cabling to the ITA. The AEDIS software handles the test configuration setup, data acquisition, and data storage via high-speed RAID drives.

During development of the test system, AEDIS hardware and software were also used to emulate the actual FPA and electronics to verify, before deployment, that the test system was working as required. This same setup can also be used for periodic verification as might be needed for an annual equipment performance audit.

Finally, configuration files were built from user-created scripts to give the client flexibility for adding new test capabilities for the DUT.

Some of the significant hardware and software challenges mitigated by this combination of PXI FlexRIO and AEDIS are:

- Interface to tester: The AEDIS system is treated as an instrument managed by an overall test system. The client developed some custom C# code to interface to the AEDIS REST API interface to automate their test procedures.

- DUT interfacing: Standard (keyed) cabling ran between the DUT and the custom AEDIS “personality” card to match cabling to the DUT. The output of this personality module went to the AEDIS LVDS modules.

- Channel skew: The high-frequency digital LVDS signals from the DUT can develop noticeable timing skews between channels upon arrival at the FlexRIO inputs due to signal path length differences. The test system had to detect and compensate for these skews before combining the bitstreams into bytes, then pixels, then an image.

- High data rates: Not only were the digital data output at high frequency but many channels were needed to accommodate the full frame rate of the DUT. The FPGA and the PXI backplane needed enough processing and transport bandwidth to accommodate the throughput.

- Interlocks: Keyed cabling and “signal present” software checks assured proper connections between the DUT and the AEDIS hardware before testing would begin. These safety checks were justified due to the high cost of each DUT.

- User-defined scripts: Scripts created and edited by the user provided flexibility to address future test types and system obsolescence. For example, the scripts defined details such as a) the DUT-specific commands (some of which could not be shared with us for secrecy reasons), b) when the image is being captured (or ignored), and c) if the image is stored to disk or RAM.
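To make the channel-skew item concrete, here is a minimal software sketch of one common de-skew approach. It is not the AEDIS implementation, which runs in LabVIEW FPGA on the FlexRIO hardware and whose details are not described here. The sketch assumes each channel begins with a known training pattern; the pattern, the helper names, and the 4-bit pixel width are all hypothetical.

```python
def find_skew(bits, pattern):
    """Return the index where `pattern` first appears in `bits`."""
    n = len(pattern)
    for i in range(len(bits) - n + 1):
        if bits[i:i + n] == pattern:
            return i
    raise ValueError("training pattern not found")


def deskew(channels, pattern):
    """Trim each channel so the payload bits after the pattern line up."""
    starts = [find_skew(ch, pattern) + len(pattern) for ch in channels]
    length = min(len(ch) - s for ch, s in zip(channels, starts))
    return [ch[s:s + length] for ch, s in zip(channels, starts)]


def to_pixels(aligned, bits_per_pixel):
    """Pack each channel's bits, most significant bit first, into pixel values."""
    pixels = []
    for ch in aligned:
        for i in range(0, len(ch) - bits_per_pixel + 1, bits_per_pixel):
            value = 0
            for bit in ch[i:i + bits_per_pixel]:
                value = (value << 1) | bit
            pixels.append(value)
    return pixels


PATTERN = [1, 0, 1, 1]                      # assumed training pattern
ch0 = [0] + PATTERN + [1, 0, 0, 1]          # arrives one bit late
ch1 = [0, 0, 0] + PATTERN + [0, 1, 1, 0]    # arrives three bits late

aligned = deskew([ch0, ch1], PATTERN)
pixels = to_pixels(aligned, bits_per_pixel=4)
print(aligned, pixels)
```

In this toy data the two channels carry different delays, yet after alignment each yields one clean 4-bit pixel. A hardware implementation would typically apply the measured per-channel delay continuously rather than trimming stored lists.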

| SOFTWARE FUNCTIONS |
|---|
| Browser-based app for manual operation and recipe creation and editing |
| REST API interface for support of automated operation |
| Configuration of the test via scripts |
| Pre-test interlock checks |
| Data collection, bit stream processing, and mapping digital bitstream to pixels |

| HARDWARE USED |
|---|
| Standard AEDIS components for signal buffering, conditioning, and signal acquisition |
| Custom personality hardware for connectivity and physical connection interlocks |
| Signal test points in AEDIS breakout ITA modules enabled use of a logic analyzer for troubleshooting and further verification |

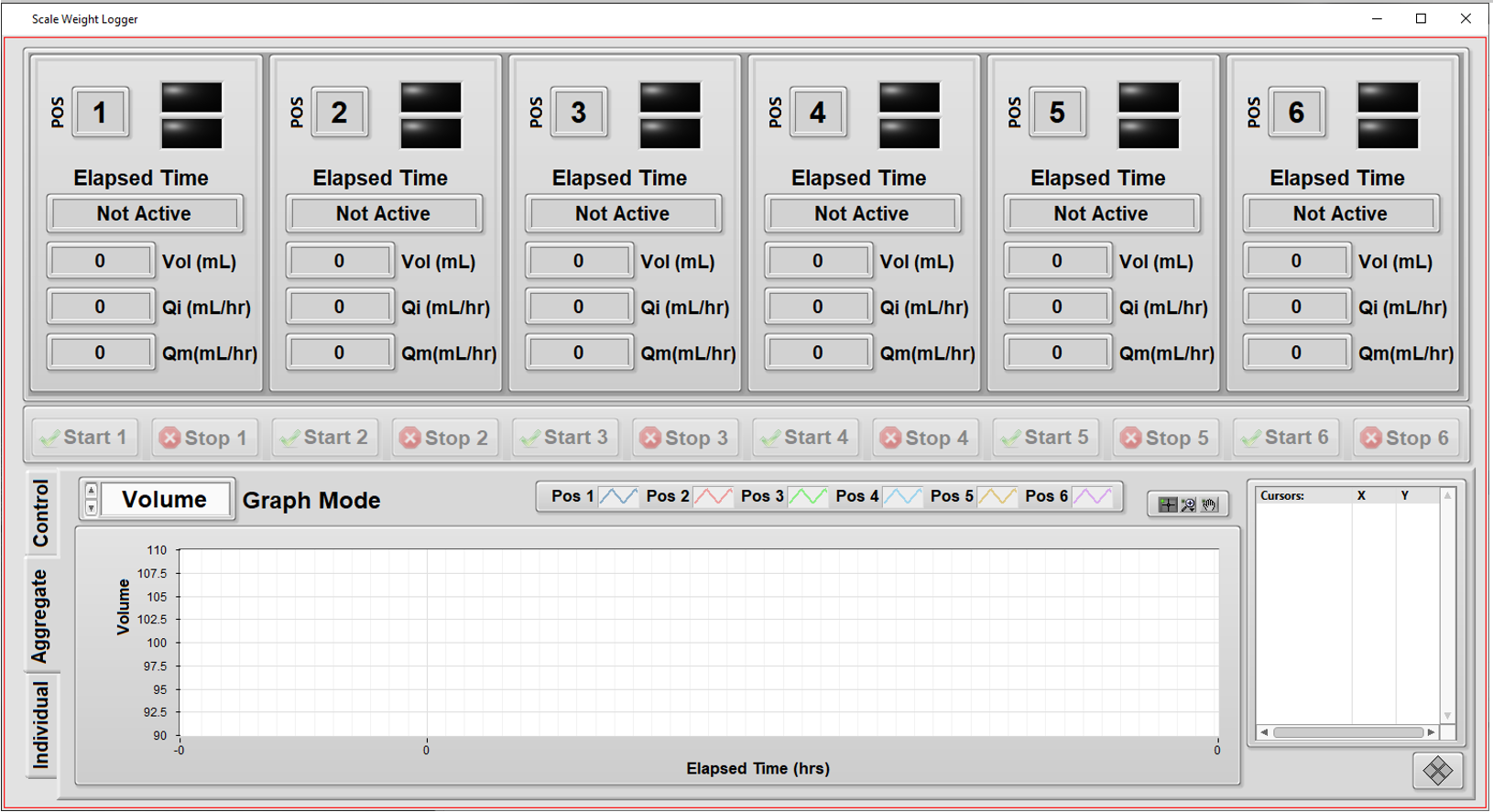

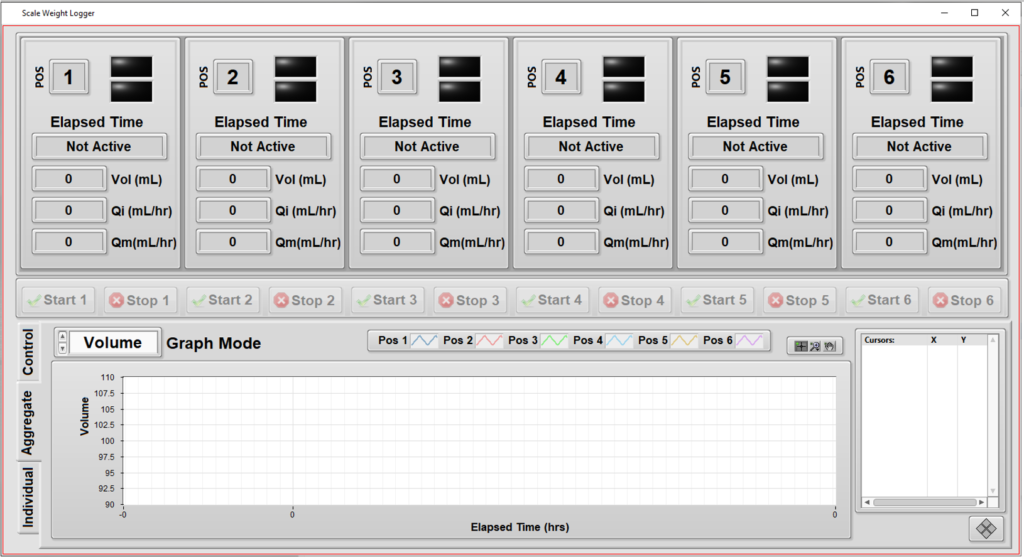

Automated Measurement System – Verifying Flow Rate Performance in a Medical Device

Assessing quality and managing traceability of a medical device

Automation increases throughput by testing multiple units independently

Client

Worldwide supplier of products for surgery

Challenge

Our client wanted to perform detailed quality checks on the performance of some of their fluid dispensing products. These products dispense fluid over long periods of time (hours) so rigorous testing of each unit in production would take too long. But, being medical devices, these products need to follow ISO 28620 standards and FDA 21 CFR Part 820 regulations, so a rigorous test process is helpful to fulfill these needs. The automated test system below is part of that overall fulfilment.

The client came to Viewpoint with the following high-level desires for a system to test the product:

- Support independent configuration and testing for up to 6 units.

- Simplify overall test setup by copying the configuration from one unit to another.

- Handle different volume amounts supported by assorted models.

- Measure from each unit the weight of fluid dispensed as a function of time. (Weight was converted to volume using the fluid density.)

- Compute the “instantaneous” flow rate (volume vs time) as the test progresses.

- Keep track of the calibration status of the weight scales at each of the 6 positions in the test system.

- Enable some measurements to be excluded at the start and end of the run for calculations of average flow rate.

- Provide graphs and metrics of results to enable faster review of the data during the test.

- Add ability to comment on each unit while testing is in progress, and after, and track these for compliance.

- Print (PDF) a report on each unit’s results along with its identifying info, such as subcomponent lot numbers, the test datetime stamp, and the operator’s ID.

Solution

The client had an initial version of the testing application which they developed for testing their various initial design iterations. Viewpoint enhanced this existing application with the features listed above. The motivations for this enhancement were to:

- Automate testing of the initial production units more thoroughly than the existing application allowed.

- Enhance the user experience for easier testing.

A major aspect of the user experience was to support testing of multiple units independently from each other so that unit testing on one device could start/stop while other devices were installed in (or removed from) the tester without interrupting tests on other units.

- This need was especially important since some models might take twice as long to test as other models or the setup of one unit might need additional adjustments before starting. This meant that starting and stopping all at the same time could reduce utilization of the tester, and it made sense to have independent and parallel operation.

- Furthermore, with parallel testing, the tester was not constrained to having a full set of units to begin testing operation, since the tester could run with only 1 unit installed.

The other major enhancements focused on the user experience by offering real-time data of a particular unit’s testing, as well as real-time graphs to show progress. These graphs were useful because the operator could clearly see when a unit was not performing as expected and the test for that unit could be aborted without affecting testing on the other units.

Since this testing needs to follow the requirements in the ISO 28620 standard and 21 CFR Part 820, it was important for the application to be aware of the calibration status of each of the 6 weight scales, one for each position in the test system.

Benefits

The main goal of this project was to augment the test operator’s ability to set up the testing of products while providing real-time visual feedback to the operator about the testing status.

Some of the benefits of this automated test system were:

- The test automation provided consistency resulting from the software-enforced test process.

- Test status via the visual feedback helped increase the efficiency of the operator.

- The enhanced user experience made testing easier.

System Overview

The application was developed in LabVIEW and measurements were made via RS-232 communications to each of the 6 weight scales. Once a unit was installed in the tester and the operator started the test on that unit, the application:

- tared that unit’s scale,

- started the flow,

- and collected weight measurements frequently to build a curve of “instantaneous” flow versus time.

These “instantaneous” flow numbers were used to compute statistics such as maximum flow and average flow over the course of the entire test run.
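The calculation described above is simple enough to sketch. The Python below is illustrative only (the application itself was written in LabVIEW): it converts cumulative weight readings to volume using the fluid density, differences them to get per-interval flow, and computes statistics with optional exclusion of readings at the start and end of the run. The function names, units, and sample data are assumptions.

```python
def flow_rates(times_h, weights_g, density_g_per_ml):
    """Return per-interval flow (mL/h) from cumulative weight readings."""
    volumes = [w / density_g_per_ml for w in weights_g]
    return [
        (volumes[i] - volumes[i - 1]) / (times_h[i] - times_h[i - 1])
        for i in range(1, len(volumes))
    ]


def summarize(rates, skip_start=0, skip_end=0):
    """Max and mean flow, ignoring intervals at the start and end of the run."""
    kept = rates[skip_start:len(rates) - skip_end]
    return {"max": max(kept), "mean": sum(kept) / len(kept)}


# Made-up readings: time in hours, cumulative dispensed weight in grams.
times_h = [0.0, 0.5, 1.0, 1.5, 2.0]
weights_g = [0.0, 1.0, 3.0, 5.0, 6.0]

rates = flow_rates(times_h, weights_g, density_g_per_ml=1.0)
stats = summarize(rates, skip_start=1, skip_end=1)
print(rates, stats)
```

Excluding the first and last intervals removes the ramp-up and tail-off from the average, which is the purpose of the exclusion feature listed among the client's requests.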

Some of the primary functionality of this system includes:

- Independent tests: testing a specific unit doesn’t interfere with testing of others.

- Graphs of flow rate during the test execution: visualize the unit’s performance during the hours-long test time, not just at the end.

- Calibration check: the next calibration date of each of the weight scales is maintained in a configuration file, so the operator can check that the test system is ready to run a test at a particular position.

- Different volumes: since each unit is tested independently, the system can handle different volumes for each position in the tester.

- Enhanced commenting: operators can enter comments about each unit (or all of them) and have these comments archived for compliance purposes.

| SOFTWARE FUNCTIONS |
|---|
| Logging of weight versus time for up to 6 test positions via RS-232 communications to scales. |
| Manage the configuration and commenting of the test. |
| Save, recall, and copy configuration information to ease the operator’s setup effort and time. |
| Display graphically the curves of flow versus time while the test progresses. |
| Compute statistics on the flow after test completion. |

| HARDWARE USED |
|---|
| Multiport ethernet to RS-232 serial communications. |

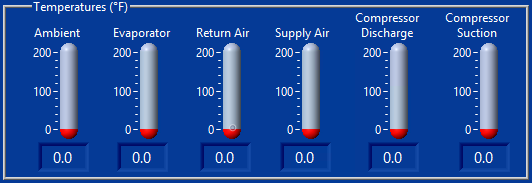

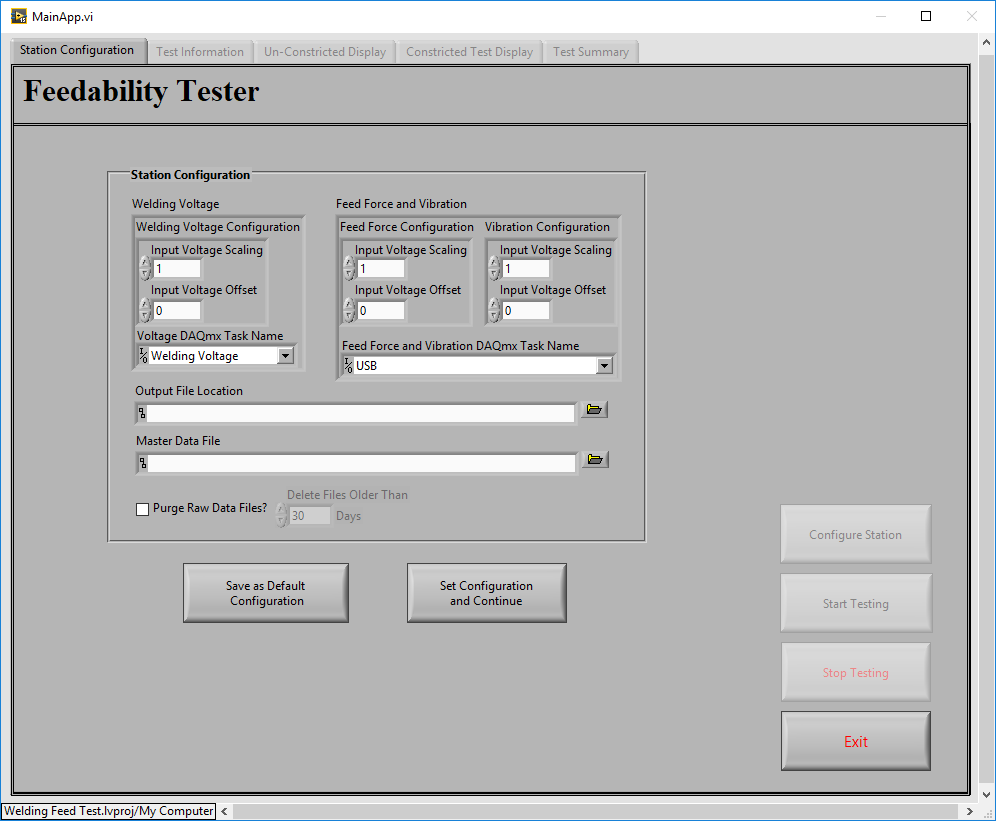

Custom Automated Test System – Quantifying Energy and Durability Performance for Refrigeration

Automation reduces manual labor while improving traceability

Assessing performance for improved energy ratings and longevity

Client – Zero Zone – Commercial refrigeration systems manufacturer

Challenge

Zero Zone wanted to improve the capabilities and durability of their new reach-in refrigeration products.

You might think that refrigeration is a mundane product line, but that is just not true! So many innovations are occurring as manufacturers are redesigning their products to improve their environmental footprint through better energy efficiency, coolants, and durability.

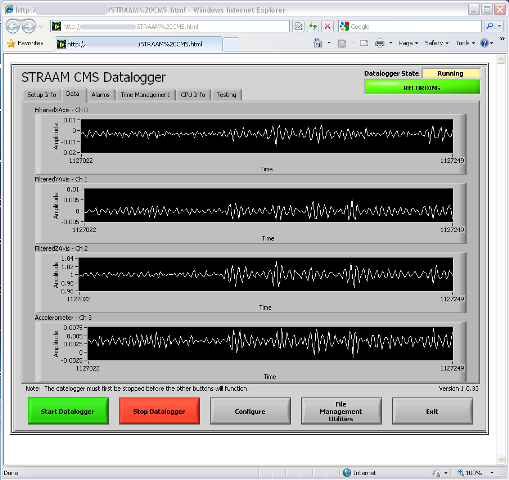

Assessment requires an understanding of the performance of the refrigeration units under many conditions. Zero Zone was taking measurements with a datalogger that had too few channels and no synchronization to the other devices that feed into the system. Plus, they had multiple models of their reach-in refrigerators that needed to be assessed. Furthermore, simplifying the data collection and analysis would make it easier to validate against ASHRAE standards.

Zero Zone came to Viewpoint with the following high-level desires:

- Expand the measurements by adding more channels and channel types (e.g., 4-20 mA, ±10 VDC and digital I/O).

- Provide graphs and KPIs to enable faster analysis of the data during the test.

- Minimize the chance of data loss during long test runs.

- Synchronize data collection and actuation.

- Automate storage of measurements per a user-defined period to eliminate manual start/stop of data collection.

- Simplify the manual configuration setup.

- Enable a way to find relevant data perhaps months or years after the test run.

Solution

Viewpoint developed a monitoring and control durability test system that could exercise Zero Zone’s refrigerators through hundreds of operation cycles over multiple conditions to simulate actual usage in, for example, a grocery store.

During initial conversations, we collaborated closely with Zero Zone to brainstorm on some potential approaches. We made some suggestions that could satisfy their desires while also managing their time and cost budgets. Part of that process involved helping Zero Zone select the cSeries modules, to ensure the most effective choice when balancing cost per channel, connectivity, and possible future needs.

As an example, by automatically populating the cells in an Excel template based on their original system’s Excel spreadsheet, we provided streamlined report generation without having to rewrite, in another app, all the calculation code embedded in their Excel file. The compromises we jointly endorsed were:

- Run an app on a PC to configure and monitor the test.

- Use both NI CompactRIO and CompactDAQ to enable robust and synchronized data collection and control with the ability to expand channels by adding modules in both the cRIO and cDAQ chassis.

- Store data on a local PC rather than a remote server to minimize the probability of data loss during the test run.

- Save configurations into Excel files for recall, and cloning, of prior setups.

- Write measurements automatically into the same Excel file for archive of the test setup and measurements.

- Create, in this same Excel file through cell formulas, the summary report from the summary calculations. This approach allowed flexibility for changes to internal and external test standards.

- Upload the summary data and test reference info into a SQL database for data management and long-term test statistics.

Digital outputs (DOs) were used to control various aspects of the test, such as door open/close and defrost on/off cycles. For flexibility, the user can specify the sequencing of these DO channels, in the Excel file used for the test, with various parameters that define the duty cycle, period, number of cycles, and start delay. The timing of these DO state changes was synchronized to the data acquisition by the real-time loop in the cRIO.
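As a rough illustration of how the four parameters named above (duty cycle, period, number of cycles, and start delay) define a DO sequence, the sketch below expands them into a list of timed state changes. It is a hypothetical Python helper, not the delivered code: the real timing was executed by the real-time loop in the cRIO, and the function and parameter names here are assumptions.

```python
def do_schedule(period_s, duty_cycle, cycles, start_delay_s=0.0):
    """Return (time_s, state) transitions for one digital output channel."""
    on_time_s = period_s * duty_cycle
    events = []
    for n in range(cycles):
        t_on = start_delay_s + n * period_s
        events.append((t_on, True))               # e.g. door open or defrost on
        events.append((t_on + on_time_s, False))  # e.g. door closed or defrost off
    return events


# Example: open a door for 15 s out of every 60 s, twice, after a 10 s delay.
events = do_schedule(period_s=60.0, duty_cycle=0.25, cycles=2, start_delay_s=10.0)
for time_s, state in events:
    print(f"t={time_s:6.1f} s -> {'ON' if state else 'OFF'}")
```

Precomputing the transition times like this makes it straightforward to check a configuration before a long run begins.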

This system was deployed to 6 test bays, each one of which might be testing a unit for as little as a few weeks or as much as a few months.

Benefits

The main goal of this project was to reduce the effort and associated human error in the design and execution of the test run.

Some of the primary benefits for this automated system were:

- Reduced Errors: pre-verified template files used for test configuration and data storage lent consistency to test setup and execution.

- Less Testing Time and Effort: the automatic execution of the test and storage of measurements enabled running tests for multiple days (and nights) without technician interaction. Technicians could work on setting up other units for test rather than babysit the existing test. On average, based on the duration of the test time, testing throughput increased by approximately 25% to 40%.

- Shorter Reporting Time and Effort: reports were available about 85% faster than when they were created manually. The quicker feedback saved costs through early detection of unit problems and faster teardown at the end.

Some additional major benefits were:

- More details on refrigerator operation: “Wow! We never saw that before.”

- Database consolidation: statistical analysis takes hours not days and includes all tests run in the lab, not just ASHRAE tests. This central database enables long term retrieval of all test data.

- Reuse: techs embraced ability to reuse and modify previous setups.

- Consistency: driving the test definition through an Excel file encouraged uniformity.

- Traceability: documented and timestamped calibration measurements.

- Flexibility: channel counts, acquisition modules configuration, calibration, and calculation formulas were straightforward to change for new test setups.

The test automation provided by this system greatly reduced the labor involved in configuring, running, and analyzing the test run. Furthermore, the customer benefited from the consistency that resulted from the software-enforced process.

System Overview

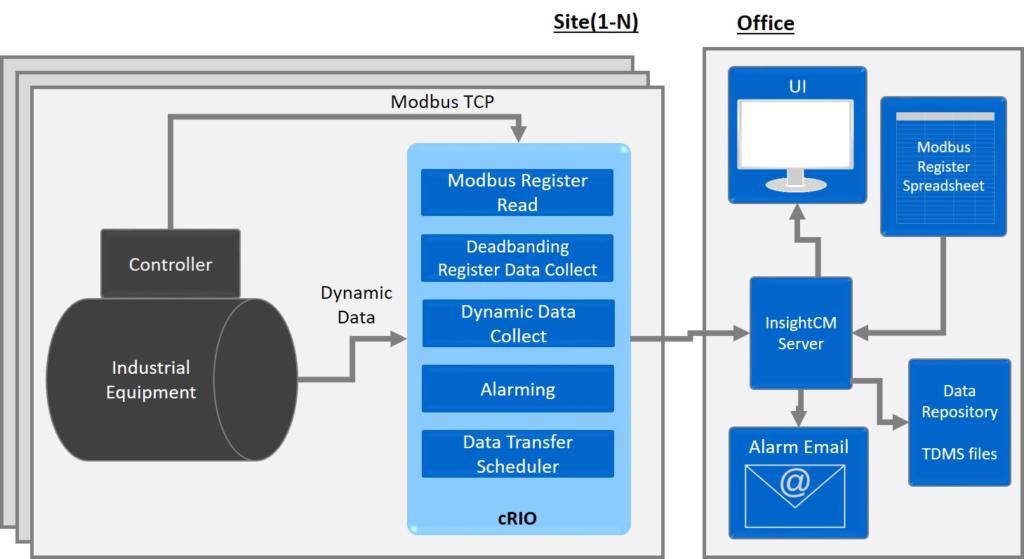

We developed the application in LabVIEW and LabVIEW RT combined with a cRIO connected to a cDAQ via TSN Ethernet.

The data acquisition modules slotted into the cRIO and cDAQ chassis handled the I/O to the customer sensors and actuators. The sensors mostly measured:

- temperature,

- pressure,

- flow,

- power, and

- voltage.

| SOFTWARE FUNCTIONS |
|---|
| Data logging of between 50 and 150 channels and control via digital signals |
| Interface with Excel files for configuration, data logging, and summary calculations |
| SQL database for summary and test setup data |
| Real-time loop for robust operation |

| HARDWARE USED |
|---|
| NI cRIO 8-slot chassis, TSN enabled |
| NI cDAQ 8-slot chassis, TSN enabled |
| Various NI cSeries signal conditioning modules |

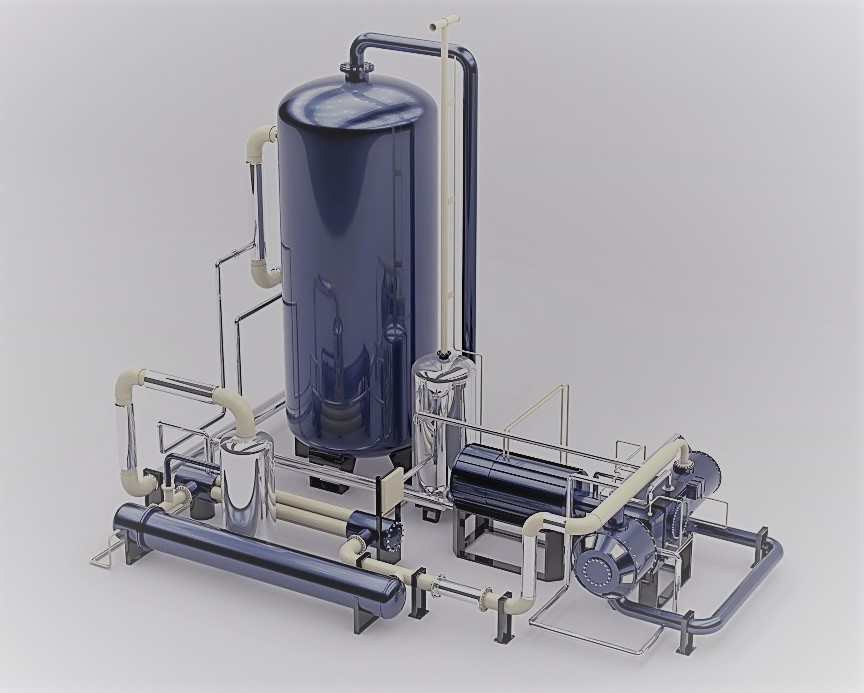

Custom Automated Test System – Characterization of Heat Transfer System Thermal Performance

R&D testing required flexibility in control schemes and measurement I/O

Client – ATSI, a large-scale System Engineering Provider

Challenge

Our client, ATSI, Inc., headquartered in Amherst NY, designs and builds complex structures and process systems, from industrial construction projects to mechanical systems for power engineering. A previous, long-standing Viewpoint customer that does research and design of thermal energy systems approached ATSI to engage in the build of a specialized test skid that would be used to assess and characterize a heat transfer system. Our long-standing end-customer requested a data acquisition subsystem based on LabVIEW.

Furthermore, the end-customer requested a subsystem that supported flexibility in the data acquisition by channel count and type, since the R&D nature necessitated adaptability. The overall test system needed to automate progress through a sequence of setpoints and ramps.

Solution

ATSI designed the automated control and sequencing with a Modicon PLC. Viewpoint augmented ATSI’s engineering resources to provide the data acquisition subsystem and setpoint sequence editing. This sequence was passed to the PLC for automated sequencing through the setpoint list. Because our mutual end-customer did not provide explicit design details about the hardware needed for data collection and control, we had flexibility to decide which aspects of these needs would be automated by the Modicon PLC and which by the PC running LabVIEW.

Since Viewpoint had previously developed a similar application for our mutual end-customer, with some of the required data acquisition needs, we chose to leverage and enhance that software platform for this project. That choice drove some of the other designs and defined the scope of work for Viewpoint and ATSI. Specifically, we worked with ATSI to select NI hardware appropriate for sampling all the high-speed data, since the PLC would not be capable, and some of the slower speed data, which needed some specific signal conditioning. Other very-slowly changing process parameters were read from PLC tags.

Some overall design decisions were:

- The LabVIEW application provided data acquisition, test configuration, and operator screens.

- The Modicon-based subsystems provided process control and safety.

- A PLC HMI for process system operation and status as well as control loop tuning.

- NI CompactDAQ (cDAQ) offered flexible PC-based acquisition channels for high sample rate historical data collection.

- A sequence editor on the PC defined the test setpoints, durations, and limits to pass to the PLC for execution.

The test configuration encompassed cDAQ channel configuration, PLC tag configuration, sequence editing, and graphical views on the acquired data. Some channels were acquired at slow rates, e.g., up to about 1 S/s for sensors measuring parameters such as temperature and flow, while others had fast rates, e.g., 1 kS/s to 10s of kS/s for sensors measuring parameters such as transient pressure and vibration. Handling the datafile storage and display of this wide range of data types and rates was important for the end-customer to compare and correlate the effects of changing operating conditions.

The sequence editor configures data logging to occur on certain conditions: immediately upon entering a new step, after a time delay following entry into a step, or when activated by the PLC upon reaching stable setpoint control. This flexibility let the end-customer manage when data collection occurred, easing the comparison and correlation of readings from selected sensors.
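The three logging conditions can be expressed as a small decision function. This is a hedged Python sketch rather than the LabVIEW implementation: the `Trigger` names, the `should_log` signature, and the idea of passing the PLC's stability flag as a boolean are all assumptions made for illustration.

```python
from enum import Enum


class Trigger(Enum):
    ON_ENTRY = "on_entry"      # log as soon as the step begins
    DELAYED = "delayed"        # log once a delay has elapsed within the step
    PLC_STABLE = "plc_stable"  # log once the PLC reports stable setpoint control


def should_log(trigger, seconds_in_step, delay_s=0.0, plc_stable=False):
    """Decide whether data logging is active for the current sequence step."""
    if trigger is Trigger.ON_ENTRY:
        return True
    if trigger is Trigger.DELAYED:
        return seconds_in_step >= delay_s
    if trigger is Trigger.PLC_STABLE:
        return plc_stable
    raise ValueError(f"unknown trigger: {trigger}")


print(should_log(Trigger.DELAYED, seconds_in_step=12.0, delay_s=30.0))
print(should_log(Trigger.PLC_STABLE, seconds_in_step=12.0, plc_stable=True))
```

Keeping the decision in one place means each step in the sequence only needs to carry its trigger type and, where relevant, a delay value.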

After the configuration is completed, the sequence is passed to the PLC. The operator starts the test on the PLC HMI and the PLC automates the test run. Data collected during the test run can be displayed in live graphs during testing to verify setup and operation, reviewed post-test in stacked graphs and overlaid plots, and exported for specialized analysis, display, and review.

Benefits

The design of this system was driven largely by the need for flexibility. Sensors and channels could be added, the test sequence could be edited with a variable number of steps with editable execution features, and the data acquisition and storage permitted various rates and logging criteria.

These design choices offered the following advantages:

- As a partner of the team, Viewpoint acted as staff augmentation for ATSI by providing experienced engineers with expert LabVIEW and data acquisition capabilities.

- Flexibility of test sequences, including setpoints and their stabilization criteria.

- Tight integration between the Modicon PLC and LabVIEW-based PC enables critical control and safety to execute reliably and yet adjustably.

- Customized mechanical all-welded skid plugs into end-customer’s test article.

- Setup of data logging including configurable sample rates.

- Ability to add channels by plugging in supplemental DAQmx-based cDAQ modules.

- The LabVIEW application architecture is actor-based for straightforward inclusion of new data sources as needed in the future.

- New data sources are registered with the object-based data aggregator.

- The system handles multiple days of test execution.

- The multi-pronged viewer allows verification checks during setup and operation as well as post-test review.

System Overview

The custom automated test system supplied to the end-customer was a hybrid, made up of PC-based and PLC-based components coupled with the fluid-handling components on the skid. The hardware listed below includes only the data acquisition, control, and safety items, and only a high-level description of the mechanical aspects.

| SOFTWARE FUNCTIONS |
|---|
| NI LabVIEW for Windows [Viewpoint] |
| NI LabVIEW Modbus driver [Viewpoint] |
| NI DAQmx hardware drivers [Viewpoint] |
| Actor-based object-oriented LabVIEW application for the PC [Viewpoint] |
| Modicon Concept software [ATSI] |
| Blue Open Studio HMI software [ATSI] |
| Function Block Programming for the PLC [ATSI] |

| HARDWARE USED |
|---|
| Modicon PLC and modules for pressure, temperature, flow and other process variables |
| NI CompactDAQ chassis and modules, e.g., 4-20 mA, RTD, thermocouple, thermistor, and 200 kS/s analog input |
| 600 VDC Power supplies |
| Components to flow fluid, including pumps, valves, pressure regulators |
| Intel Tower PC for LabVIEW |
| Mini Industrial PC for HMI |

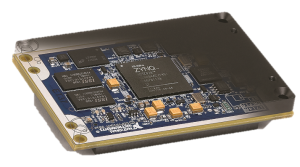

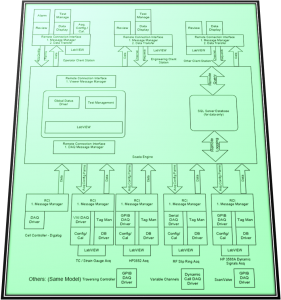

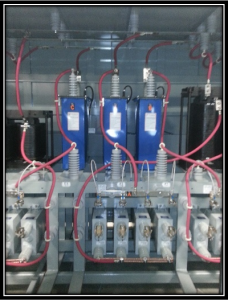

Replacing Wire-wrap Boards with Software, FPGAs, and Custom Signal Conditioning

Electronic components of fielded systems were aging out

Reverse engineering effort converted wire wrap boards to FPGA-based I/O

Client – Amentum – A supplier for Military Range System Support

Challenge

Amentum (www.amentum.com) supports a decades-old system deployed in the early 1980s. While the mechanical subsystems were still functioning, the wire-wrapped discrete logic and analog circuitry were having intermittent problems.

Systems designed and built decades ago can sometimes have wonderful documentation packets. Nevertheless, we’ve been burned too often when the docs don’t incorporate the latest redlines, last-minute changes, or other updates.

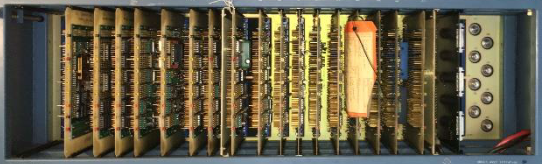

The replacement system needed to be a form-fit-function replacement to land in the same mounting locations as the original equipment with the same behavior and connections. Below is an image of the existing wire-wrap boards and their enclosure. We had to fit the new equipment in this same spot.

Figure 1 – Original wire-wrap boards

Finally, Amentum wanted to work with Viewpoint in a joint development approach. While our capabilities looked complementary, we didn’t know at the start how well our technical expertise and work cultures would mesh. As it turned out, we worked extremely well together as a team, and neither company alone could have easily delivered the solution.

Solution

Since the team treated the existing documentation package with suspicion, we adopted a “trust but verify” approach. We would use the documents to give overall direction, but we would need details from the signals to verify operation.

Leveraging Amentum’s experience with the fielded systems, the team decided early on to record actual signals to understand the real I/O behavior. We used the system’s “test verification” unit to run the system through the checkout procedures normally run prior to system usage. With the verification unit exercising the system, we captured the discrete-logic digital I/O with a logic analyzer and the analog signals with an oscilloscope and DMM. We then reviewed the available schematics to confirm that the recorded signals made sense.

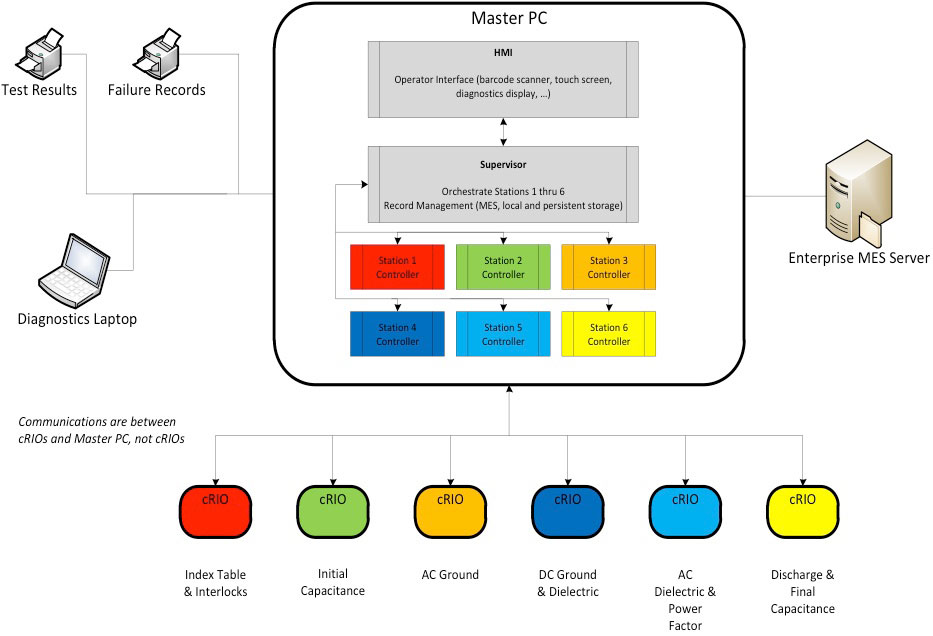

With a trustable understanding of system operation, Amentum created a requirements document. We jointly worked on the design of the new system. There were both an “inside” system (in a control shelter) and an “outside” system (in the unit’s pedestal).

Some overall tasks were:

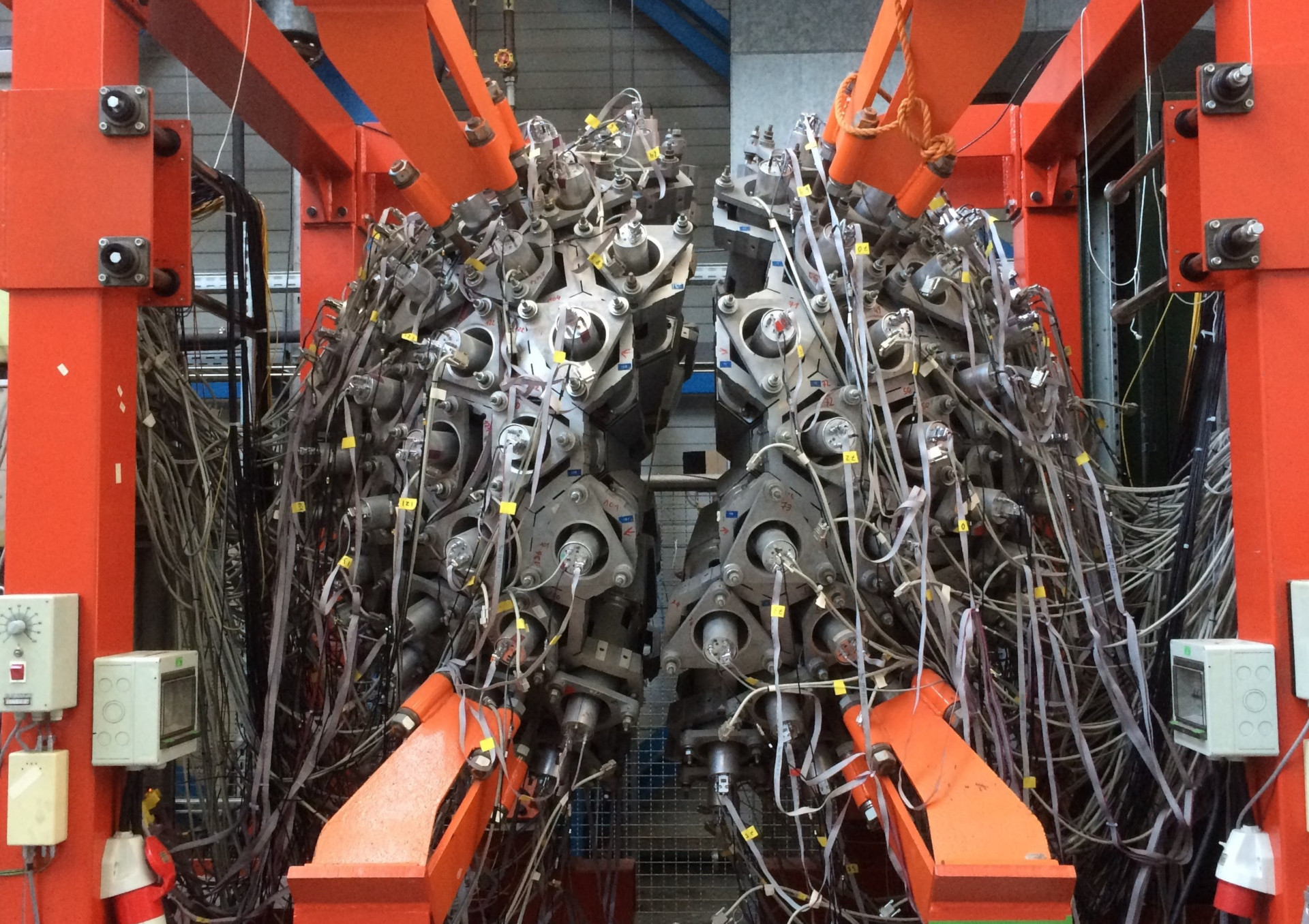

- Viewpoint recommended an architecture for the inside application running on PXIe LabVIEW RT and FPGA layers.

- Amentum created the system control software on a Linux PC.

- Viewpoint developed the more intricate parts of the inside application and mentored Amentum on other parts they developed. This work recreated the existing discrete logic and analog I/O using PXIe NI FPGA boards.

- Viewpoint designed custom interposer boards to connect harnesses to the NI PXIe equipment, including a test point and backplane boards.

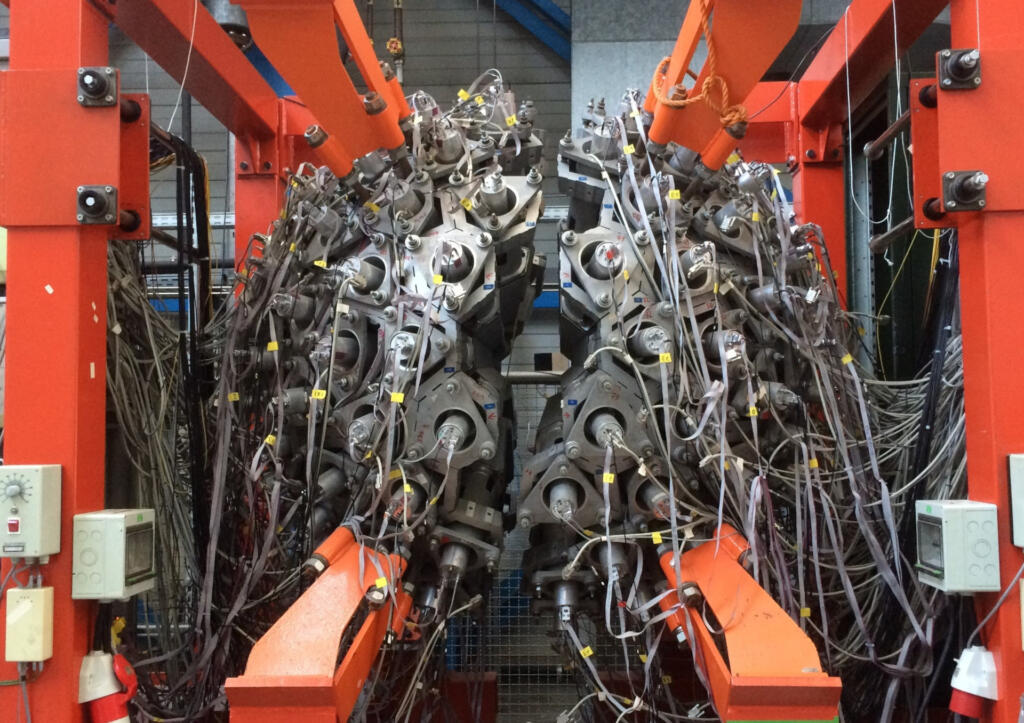

- Amentum designed and developed the cRIO-based outside system application and Viewpoint created a set of custom interposer boards to connect harnesses to the cSeries modules.

Critical to this project were our hardware selection services. By combining our engineering skills for the reverse engineering of the legacy electronics with our knowledge of the NI hardware, we narrowed the hardware choices to a few NI FPGA boards.

These PXIe FPGA boards and cRIO FPGA-backed modules handled the required 60 MHz clock-derived signals with correct phases, polarity, analog inputs, and so on. Furthermore, the wire-wrap boards were register-based so the PXIe had to decode “bus signals” sent over a Thunderbolt bus to emulate the programming and readouts from the various wire-wrap boards.

Figure 2 – PXIe replacement to wire-wrap boards
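
The register-based emulation described above can be pictured with a small Python sketch. The real work was done in LabVIEW FPGA on the PXIe boards. The class name, 16-bit register width, addresses, and transaction layout below are assumptions chosen for illustration, not details of the fielded system.

```python
from typing import Dict, Optional


class RegisterBank:
    """Software stand-in for one register-based board (sizes are hypothetical)."""

    def __init__(self, size: int, width_bits: int = 16) -> None:
        self._mask = (1 << width_bits) - 1   # keep values within the register width
        self._regs = [0] * size

    def _check(self, address: int) -> None:
        if not 0 <= address < len(self._regs):
            raise IndexError(f"register address {address} out of range")

    def write(self, address: int, value: int) -> None:
        self._check(address)
        self._regs[address] = value & self._mask

    def read(self, address: int) -> int:
        self._check(address)
        return self._regs[address]


def handle_transaction(boards: Dict[int, RegisterBank], board_id: int,
                       address: int, is_write: bool, data: int = 0) -> Optional[int]:
    """Decode one assumed bus transaction and route it to the addressed board."""
    bank = boards[board_id]
    if is_write:
        bank.write(address, data)
        return None
    return bank.read(address)


# Two emulated boards, one write (truncated to 16 bits) and one readback.
boards = {0: RegisterBank(size=8), 1: RegisterBank(size=8)}
handle_transaction(boards, board_id=1, address=3, is_write=True, data=0x1ABCD)
readback = handle_transaction(boards, board_id=1, address=3, is_write=False)
```

Keeping each legacy board behind its own register bank mirrors the original hardware layout, which makes it easier to compare emulated behavior against recorded signals one board at a time.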

Amentum wanted to be able to support the LabVIEW FPGA VIs used to replace the functionality of the discrete logic. So, Viewpoint acted as mentor and code reviewer with Amentum to ramp them up on using LabVIEW FPGA effectively. Neither one of us alone would have been successful coding the applications in the allotted time. Joint knowledge and experience from both Viewpoint and Amentum were required.

Signal conditioning and harnesses needed to be reworked or replaced as well, of course, since the landing points for the wires were different in the new system. Viewpoint suggested a technique, which we’ve used frequently in past obsolescence upgrade projects, to create PCBs that accepted existing connectors.

For the cRIO, these interposer “connection” PCBs plugged directly into the cRIO cSeries module. For the PXIe, these interposer PCBs accepted the field wiring connectors and converted them to COTS cables that connected to the PXIe modules. The interposer PCBs could incorporate signal conditioning as needed. All told, about 200 signals were passed between the PXIe and various other subsystems, and about 100 for the cRIO, so reducing the need for custom harnesses saved significant wiring labor and cost.

Figure 3 – cRIO with interposer boards between cSeries and field harnesses

The work to design and build the signal conditioning custom electronics was split between Viewpoint and Amentum. Viewpoint did more design than build and handed over the schematics and Gerber files to Amentum so they could manage the builds while also being able to make modifications to the boards as needed.

Benefits

Amentum wanted an engineering firm that was willing to work alongside them as a partner. Joint discussions about architecture and design led to a collaborative development effort where Amentum benefited from Viewpoint’s extensive expertise and guidance on LabVIEW architectural implementation and FPGA coding style.

The main outcomes were:

- As a partner of the team, Viewpoint acted as staff augmentation by providing experienced engineers with technical capabilities that Amentum initially lacked.

- This team approach delivered a stronger product to the end-customer more quickly than either of us could do alone.

- The combination of Viewpoint’s and Amentum’s experience reduced the reverse engineering that would otherwise have been needed given the lack of firm requirements.

- Reduction of electronics obsolescence by using software-centric FPGA-based functionality. The LabVIEW FPGA code could be recompiled to target future board models.

- Increased software-based functionality simplifies future updates and modifications.

- Decrease in number of parts leading to simpler maintenance.

- Lower power consumption eliminated the need for an anticipated HVAC upgrade.

- Cybersecurity concerns were reduced by using Linux-based systems and FPGA coding.

System Overview

Using software to emulate the old hardware was a critical success factor. Since the requirements were not 100% solid at the start of the project, some field-testing was required for final verification and validation. The flexibility of the software approach eased modifications and tweaks as development progressed. A hardware-only solution would have necessitated difficult and costly changes. For example, some of the changes occurred very near the final deployment after the system was finally connected to an actual unit in the field.

| SOFTWARE FUNCTIONS |
|---|
| Emulate original discrete logic functions via FPGAs |
| Emulate original analog signal I/O |
| Overall system control via Linux PC |
| Maintain the same user experience as existed before |
| Modern application architecture for simpler maintenance |

| HARDWARE USED |
|---|
| NI cRIO chassis with various cSeries modules |
| NI PXIe chassis with FPGA modules to handle all the analog and digital I/O via a combination of multifunction and digital-only cards |
| Custom PCBs for signal conditioning and connectivity |

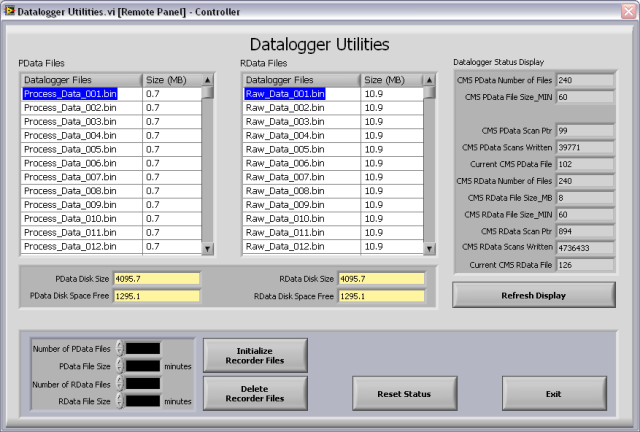

Enhanced Portable Data Acquisition and Data Storage System

Using a Real-Time Operating System (RTOS) provides a high level of synchronization and determinism for acquired data.

Client

Tier 1 Automotive Design and Manufacturing Supplier

Challenge

Our client had an existing data acquisition system, used for mechanical product validation testing, that had undergone many updates and patches for over 15 years. These updates and patches, performed by multiple developers, had rendered the software portion of the system somewhat unstable. Furthermore, the system hardware was based on NI SCXI, which was becoming obsolete. These issues prompted our client to migrate to an entirely new system.

New requirements for this upgrade included utilizing a PXI controller running NI Linux Real-Time, an RTOS, executing a LabVIEW RT application. Selection of the replacement NI hardware required knowledgeable engineering to assure compatibility between the selected NI hardware and the legacy SCXI equipment. Part of that compatibility assessment included an understanding of the software application so we could know for certain how the SCXI equipment was used. A blind selection based entirely on hardware specs would have missed nuances, such as the need for synchronized acquisition.

The data acquisition software had to support a variable mix of signal conditioning modules in the PXI chassis. In addition, the data acquired from these signal conditioning modules needed to be synchronized within microseconds.
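
As a rough illustration of what a microsecond-level synchronization requirement implies, the Python sketch below checks the spread of acquisition start times across modules. The module names, timestamps, and 1 µs tolerance are hypothetical, and the check is a post-acquisition sanity test rather than a description of how the system enforces synchronization.

```python
from typing import Mapping


def max_skew_ns(start_times_ns: Mapping[str, int]) -> int:
    """Return the largest difference between any two module start times, in ns."""
    if not start_times_ns:
        raise ValueError("at least one module start time is required")
    times = list(start_times_ns.values())
    return max(times) - min(times)


def is_synchronized(start_times_ns: Mapping[str, int], tolerance_ns: int = 1_000) -> bool:
    """Return True if all modules started within `tolerance_ns` of each other."""
    return max_skew_ns(start_times_ns) <= tolerance_ns


# Hypothetical start timestamps (ns) reported for three modules in one chassis.
starts = {
    "dsa": 1_000_000_000,
    "bridge": 1_000_000_200,
    "thermocouple": 1_000_000_650,
}
skew = max_skew_ns(starts)      # 650 ns between the earliest and latest module
ok = is_synchronized(starts)    # within the assumed 1 µs tolerance
```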

Solution

Viewpoint leveraged another application, developed for the client a few years prior, to harmonize the user interface and to reduce development effort. Most of the development time focused on support and configuration of the multiple module types and ensuring that the data synchronization functioned as required. The result was an ultra-flexible, portable, high-speed data acquisition software/hardware combination that can be used to acquire time-sensitive, synchronized data across multiple modules in a PXI chassis running a real-time operating system.

Benefits

The upgraded system offers the following features:

- Highly configurable real-time data acquisition hardware/software solution based on LabVIEW RT and PXI hardware. Our client works closely with OEMs to assure compatibility and durability with their products, often going to the OEM’s test cells to collect performance data. The configurability in modules and channels affords the fastest possible setup at the OEM’s site which minimizes time and cost in the test cell.

- Configuration files stored in a SQL database format. Saving channel and module setups in SQL allows the test engineer to locate previous hardware and data acquisition configurations. The usual alternative is a bulk save of an entire system setup, whereas the database affords a more granular, and hence more flexible, approach.

- Immediate test feedback through graphs and analog indicators, used to assure data quality before leaving the test cell.

- Data playback features after the data has been acquired, used for in-depth review of data after leaving the test cell.

- Data acquisition on the RTOS provides assurance that the acquisition will not be interrupted by network or other OS activities, which was occasionally an issue with the prior Windows-based application.

- Synchronization between signal conditioning modules ensures time-critical data taken on separate modules can be compared and analyzed.
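
The database-backed configuration storage listed above can be sketched in a few lines of Python. The source only says the setups are stored in a SQL database format, so the use of SQLite, the table and column names, and the helper functions here are all assumptions made for illustration.

```python
import sqlite3

# Hypothetical schema: one row per module setup, one row per channel setup.
SCHEMA = """
CREATE TABLE module_setup (
    id INTEGER PRIMARY KEY,
    setup_name TEXT NOT NULL,
    slot INTEGER NOT NULL,
    module_type TEXT NOT NULL
);
CREATE TABLE channel_setup (
    id INTEGER PRIMARY KEY,
    module_id INTEGER NOT NULL REFERENCES module_setup(id),
    channel_name TEXT NOT NULL,
    sample_rate_hz REAL NOT NULL
);
"""


def open_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open a database and create the illustrative schema."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def save_module(conn, setup_name, slot, module_type, channels):
    """Store one module and its (channel_name, sample_rate_hz) pairs."""
    cur = conn.execute(
        "INSERT INTO module_setup (setup_name, slot, module_type) VALUES (?, ?, ?)",
        (setup_name, slot, module_type),
    )
    module_id = cur.lastrowid
    conn.executemany(
        "INSERT INTO channel_setup (module_id, channel_name, sample_rate_hz) "
        "VALUES (?, ?, ?)",
        [(module_id, name, rate) for name, rate in channels],
    )
    conn.commit()
    return module_id


def find_channels(conn, module_type):
    """Look up previously saved channels for one module type across all setups."""
    rows = conn.execute(
        "SELECT m.setup_name, c.channel_name, c.sample_rate_hz "
        "FROM channel_setup c JOIN module_setup m ON m.id = c.module_id "
        "WHERE m.module_type = ? ORDER BY m.setup_name, c.channel_name",
        (module_type,),
    )
    return rows.fetchall()
```

Because modules and channels are stored as separate rows, a query such as `find_channels(conn, "thermocouple")` can pull out just the thermocouple channels from earlier setups instead of reloading an entire saved system configuration.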

System Overview

The system consisted of custom LabVIEW RT software, intended to run on an engineer’s laptop and the PXI real-time controller, plus a PXI chassis populated with a flexible assortment of NI signal conditioning modules (provided by the client).

The software used an object-oriented Actor-based architecture, which facilitates adding new signal conditioning modules and flexible communications between the host PC and the real-time controller.

| SOFTWARE FUNCTIONS |
|---|
| DAQ Task Configuration |
| Event-Based DAQ Trigger |
| Data Synchronization |
| Real-Time Data Visualization |
| Data File Playback Utility |
| Datalogging to TDMS File |

| HARDWARE USED |
|---|
| PXIe Chassis (4-, 9-, or 18-Slot) |
| PXI Real-Time Controller |
| PXI Multifunction I/O Module |
| PXI Digital I/O Module |
| PXI Counter/Timer Module |
| PXI Thermocouple Module |
| PXI DSA Module |
| PXI LVDT Module |
| PXI High-Speed Bridge Module |
| PXI Voltage Input Module |

Industrial Monitoring for a Harsh Environment

Developing an industrial monitoring system for ultrasound-based sensing in a harsh environment

Client – Energy Research Lab

Challenge

Our client was experiencing problems making temperature measurements in a hostile, irradiated environment. Traditional temperature sensors don’t last long in this environment, so our client was developing a sensor designed for these conditions.

Special equipment is required to drive this sensor. It’s an active sensor requiring an ultrasound pulser/receiver (P/R) and high-speed digitizer to make it function.